How Do Transformers Learn to Associate Tokens: Gradient Leading Terms Bring Mechanistic Interpretability

Review

| 닉네임 | 한줄평 | 별점 (0/5) |

|---|---|---|

| 눈물 | • 강점 : transformer의 블랙박스 동작 구조를 해석가능한 통계 관계 구조로 표현해 이론적인 가설이 실제 성능에 근사한지 실험으로 확인함. • 약점 : 초기 gradient 구조에 한해서만 분석했다는 점. 전체 구조를 파악하려면 leading term으로 제한됨. 하지만 실제 transformer는 매우 복잡한 구조기 때문에 이렇게 실험할 수 밖에 없었던 것 같음. (그럼에도 초기 구조를 잘 풀어내어 해석해낸 듯) • 보완점 : 초기 gradient의 해석만으로도 실제 구조에 근사함을 확인했으므로, full gradient의 해석까지 이끌어낸다면, transformer 전체를 해석할 수 있을지도.. | 3.3 |

| 피땀 | • 강점: 트랜스포머의 self attention에서 나타나는 패턴에 대해 분석하고 이걸 어떻게 하는지에 대한 연구동기가 탄탄함. 기존 연구에 비해 훨씬 실제와 비슷한 환경에서 실험을 진행함 • 단점 & 보완점: 학습 초기 단계에서만 가능하다고 했는데 좀 더 학습이 진행됐을 때의 실험 결과가 있으면 좋을듯 | 4.1 |

| 웃으면서 보자 | 장점: 트랜스포머 학습 과정을 수학 및 실험적으로 잘 해석해낸듯. 토큰 단위에서 풀어내는 연구가 꽤 많이 나오는 것 같은데, 그 계열에 잘 어울리는 논문이라고 생각함. 타이밍이 좋은 연구 단점: 토큰의 복잡설, 의미적 복잡성, 지식 특이성 등에 대한 고려 없이 묶어서 실험한점. 보완점: 토큰을 더 세분화하고 경향성 분석하는 것이 의미있어보임 | 3.7 |

| thumbs-up | • 장: transformer(사실상 모든 LLM)의 gradient 경향을 체계적으로 분석함. 특히 basis function 의 조합을 통해 모델의 의미 습득을 파헤침. • 단&보완: 왜 학습 초기에만 했을까? 어차피 몇번의 gradient descent를 진행하면 saturate되는 거 같긴 한데, 실험으로 한번 더 보여줬으면 좋았을듯! | 4.2 |

| 독수리오형제 | • 강점: 기존 연구들이 주로 학습이 끝난 뒤 나타난 결과를 분석하는 데 집중했다면, 이 논문은 '학습 중에 일어나는 생성 중 원리'를 건드리고자 함 • 약점: Closed-form 설명은 early-stage training에서 association이 “처음 어떻게 형성되는지”를 설명하는 데 초점이 있음. 그래서 학습이 깊어졌을 때 같은 설명력이 유지되는지는 별개의 문제인거같다 • 보완점: 이 논문은 token간의 association을 다루는데, 상위 수준 reasoning 매커니즘으로도 확장 가능해 보임 | 3.7 |

| 삐질 | • 강점: 기존 interpretability 연구들이 “결과적으로 무엇이 학습됐는가”를 분석했다면, 이 논문은 “어떻게 학습이 시작되는가”를 수학적으로 이끌어냄. • 약점: Semantic association을 주로 co-occurrence과 같은 단순 통계로 해석하는데, 복잡한 reasoning에도 이 프레임워크를 적용할 수 있을까 생각이 듦. • 보완점: 학습이 진행될수록 high-order term이 바뀌는 임계점을 파악해서 복잡한 reasoning의 발생시점을 해석 | 3.9 |

| 팝콘 | • 장점: 최대한 실제와 유사한 설정에서 LLM 분석. 3개의 주요 basis function으로 학습 가중치 설명 • 단점 & 보완점: Figure6 포함해서, 보다 큰 모델 분석한 결과가 있었으면 더 현실적이었을듯 | 3.7 |

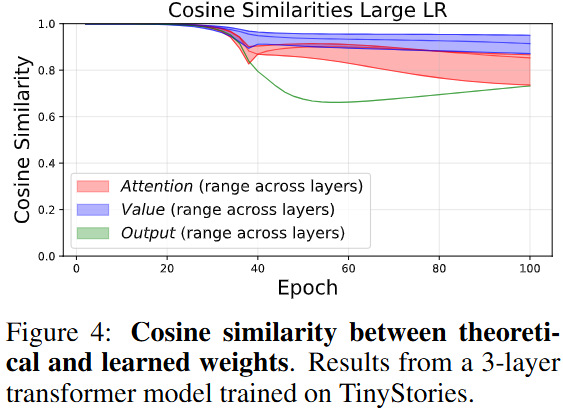

| 파이어 | • 장점: Transformer의 가중치 구조를 해석 가능하도록 하여 학습 과정 중의 원리를 밝혀낸 것. • 단점: 학습 후반부에 대한 결과가 없으며, Cosine Simliarity가 낮아지는 이유가 무엇인지? • 보완: 보다 복잡한 모델에 대한 실험 결과도 있어야 함. | 3.4 |

| 덩쿠림보 | 굉장히 novel하고 sound한데 이해를 잘 못하겠음 ㅜㅜ 어텐션의 학습을 해석한 것은 좋으나, MLP 레이어와의 관계까지 봤으면 LLM의 이해에 도움되지 않았을까 싶음. 인사이트 기반으로 활용가능성은 커보임! | 4 |

| 초콜릿 | • 장점: 트랜스포머가 학습 초기에 3가지 basis function의 조합으로 의미 관계를 형성한다는 걸 수학적으로 유도하고 실험으로 확인함 • 약점: 분석이 학습 초기 단계에만 한정되어 있어서, 모델이 학습된 이후에도 같은 구조가 유지되는지 알 수 없을듯 • 보완점: 토큰을 세분화해서 각 basis function이 어떤 유형의 토큰에서 더 강하게 나타나는지 볼 수 있으면 어떨까 | 3.8 |

TL;DR

💡

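Early in training, transformers directly encode three kinds of statistical structure into their weights, and combinations of these alone give rise to semantic relations and attention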

Summary

- Authors: University of Wisconsin, University of Technology Sydney

- Citations: 2

Research motivation

- Self-attention-based LLMs learn both factual knowledge and word knowledge

⇒ What structures form inside the model, and how are they learned?

⇒ Patterns like the following have been found:

- induction heads (pattern copying)

- linear semantic relations

- topic clustering

- Conclusion: semantic association (meaning-based links between words) is a core capability of LLMs!

- Definition: statistical (how often?) + functional (do they play similar roles within a sentence?) relations between tokens

- bird ↔ flew ⇒ frequently appear together

- car ↔ truck ⇒ easily substituted for each other

- country ↔ capital ⇒ grouped by similar meaning

→ Sentence generation and generalization require these semantic associations

“So how do transformers learn semantic associations between words?”

⇒ They emerge naturally from gradient descent optimization

⇒ Studies have been carried out to understand how these structures form during training

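Limitations of prior work

Because transformer training is very complex, prior work adopted unrealistic assumptions such as the following

- Synthetic structured language

- Toy languages with repetitive patterns or perfectly regular grammar

- Far from real natural language

- Simplified model architectures with positional encoding or residual connections removed

- Word order matters in natural language, but the model loses the ability to carry order information → behaves like a bag-of-words

- Without residual connections, early features are not preserved and gradient flow breaks down

- Unrealistic training schemes

- Component-wise training rather than end-to-end / freezing some of the weights

- Differs from how real LLMs are trained (the gradient itself propagates through the entire network)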

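Proposed idea

- Interpret transformer weights mathematically by analyzing the gradient leading terms early in training!

- Because transformers learn most semantic relations early in training, and these persist afterwards

- Early on the gradient is simple, so the weights can be approximated in closed form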

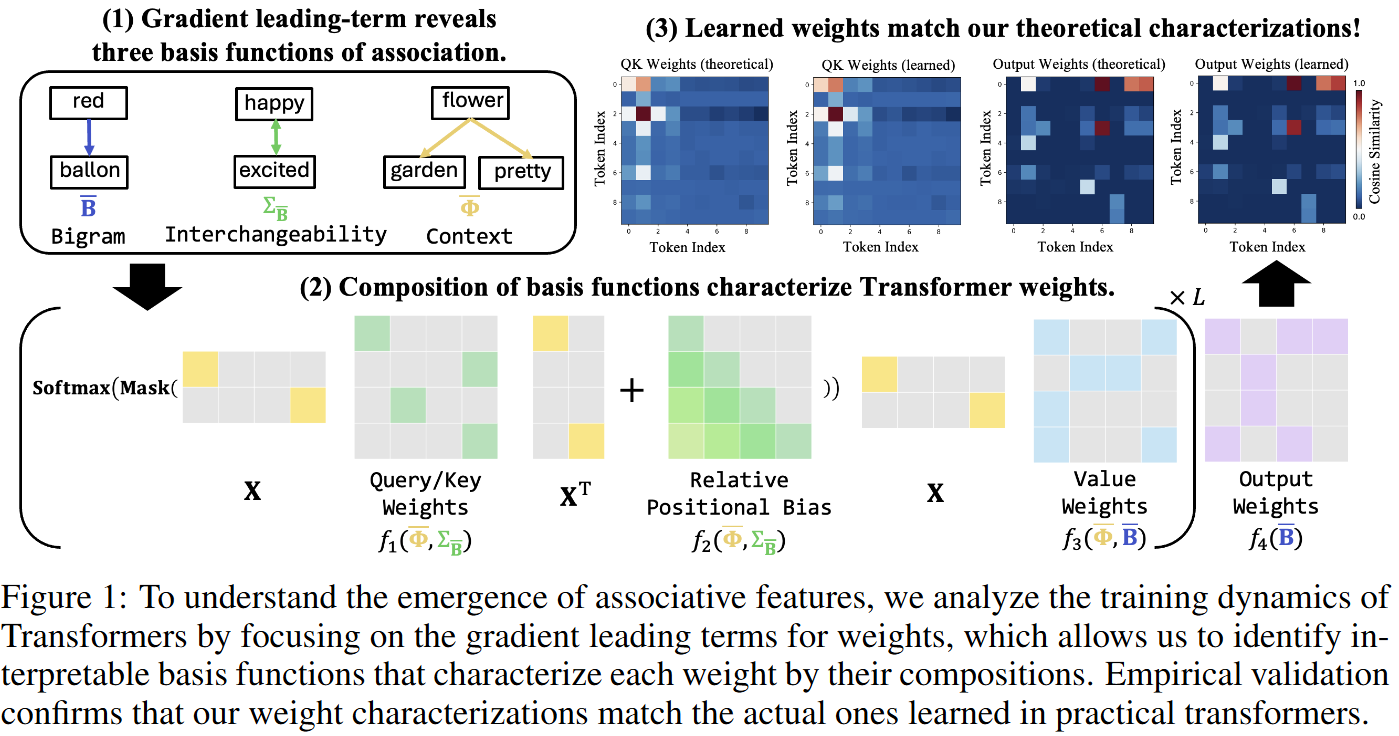

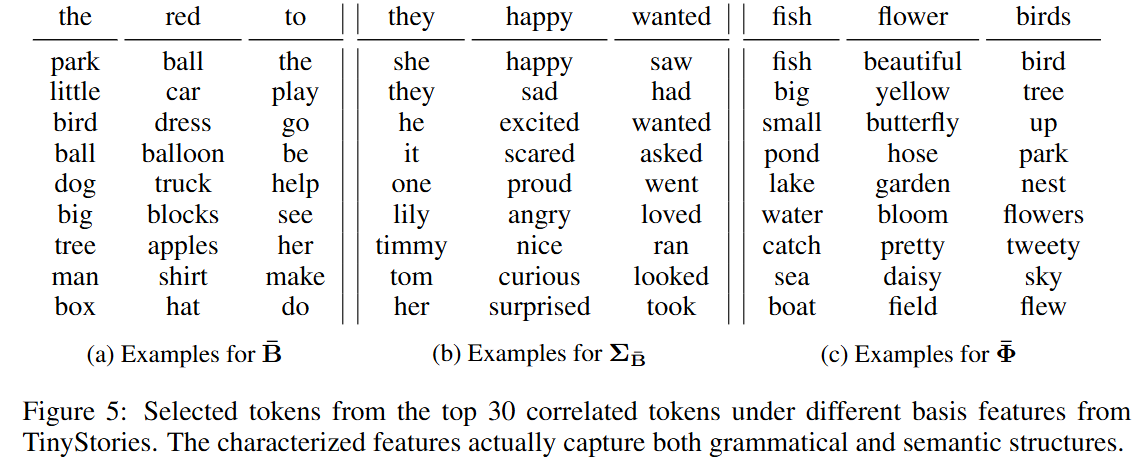

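⇒ Finding: the learned weights are not simply co-occurrence; they are expressed as a combination of 3 basis functions!

What is a basis function?

- The most basic unit of transformation that makes up meaning

⇒ A rule / function that maps a token → its relations to other tokens

- e.g., "fish" → [pond, water, lake, swim, ...]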

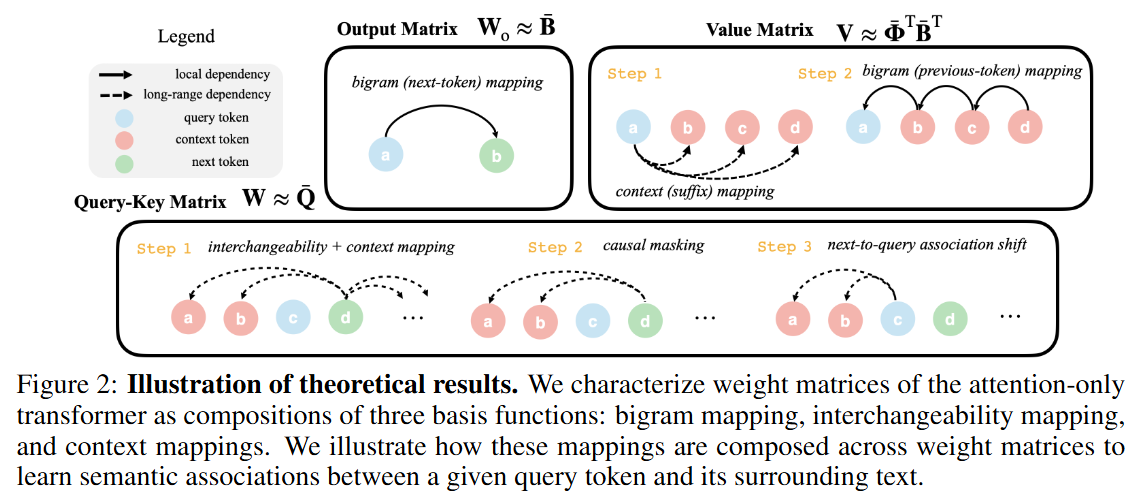

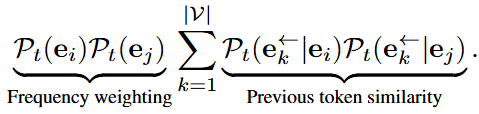

- Bigram mapping: dependencies between adjacent tokens

- Interchangeability mapping: reflects similarity between tokens (e.g., synonyms, grammatical role)

- Context mapping: higher-order semantic relations (long-range)

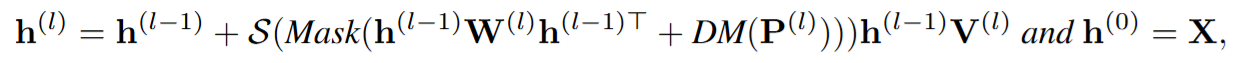

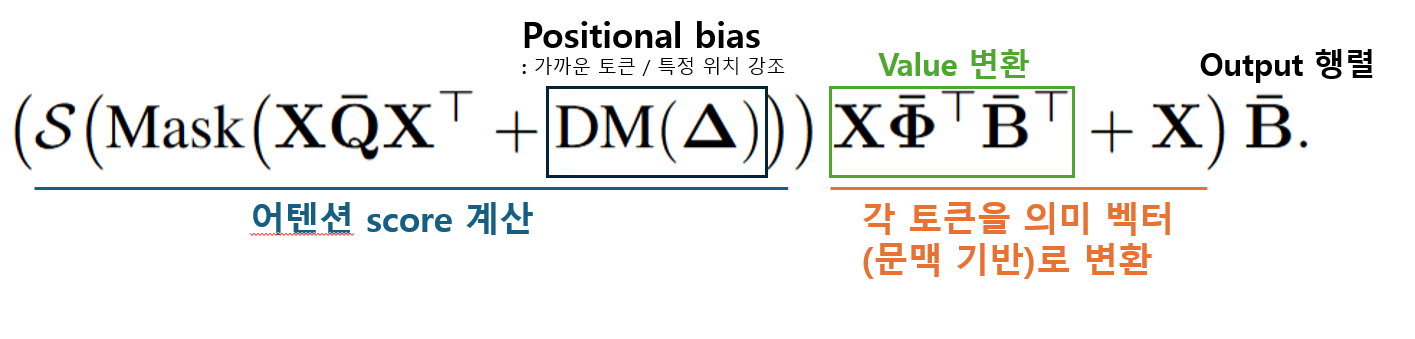

Theoretical Analysis

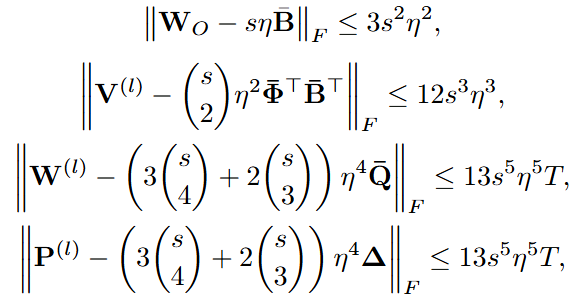

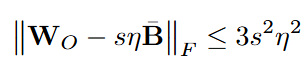

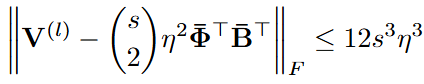

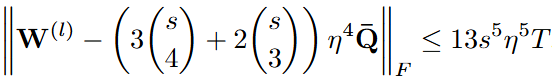

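The weights of an attention-based transformer are mostly explained by the most important part of the gradient (the leading term)

- Assumes the early-training regime: starting from small initial values (Gaussian initialization), with a learning rate and number of steps that are not too large

⇒ All layers learn the same structure!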
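To make the "small initialization, few steps" regime concrete, here is a minimal sketch on a much simpler model than the paper's. It assumes a toy next-token predictor whose logits are just a row of a weight matrix `W` (no attention, no embeddings), initialized at exactly zero instead of a small Gaussian. The function name `first_step_weights` and the setup are illustrative assumptions, not the authors' derivation. It only shows why the first gradient step can be written in closed form from corpus statistics.

```python
import numpy as np

def first_step_weights(tokens, vocab_size, lr=1.0):
    """Weights after ONE gradient-descent step from W = 0 in a toy model.

    Toy assumption (not the paper's transformer): logits for the next token
    are W[current_token], trained with mean cross-entropy over all bigrams.
    """
    # counts[i, j] = number of times token j directly follows token i
    counts = np.zeros((vocab_size, vocab_size))
    for prev, nxt in zip(tokens[:-1], tokens[1:]):
        counts[prev, nxt] += 1.0
    n_pairs = counts.sum()

    # At W = 0 the softmax is uniform (1 / vocab_size), so the gradient of the
    # mean loss w.r.t. row i is (count_i / vocab_size - counts[i, :]) / n_pairs.
    row_totals = counts.sum(axis=1, keepdims=True)
    grad = (row_totals / vocab_size - counts) / n_pairs

    # One plain gradient-descent step starting from zero weights
    return -lr * grad

W = first_step_weights([0, 1, 0, 1, 0, 2], vocab_size=3)
print(W)
```

In this toy case each row of `W` is proportional to the observed next-token counts minus a uniform baseline, so the weights after one step are a direct readout of data statistics. The paper's leading terms play an analogous role for a full transformer, with more structure than this sketch captures.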

Three basis functions

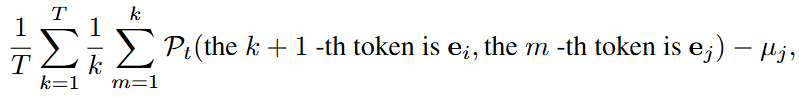

Bigram mapping

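- Token frequency: how often token i appears across the whole dataset

- First term: probability of the transition i→j

- Second term: probability that the next token appears at random

⇒ Expresses the "association" between two tokens based on how often token j follows token i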
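The two terms described above can be sketched as an empirical statistic. The exact formula from the paper is not reproduced in these notes, so the form used here, conditional next-token probability minus overall token frequency, is an assumption chosen to match the bullet descriptions. The name `bigram_association` is hypothetical.

```python
import numpy as np

def bigram_association(tokens, vocab_size):
    """Assumed form: B[i, j] = P(next = j | current = i) - freq(j)."""
    # counts[i, j] = number of times token j directly follows token i
    counts = np.zeros((vocab_size, vocab_size))
    for prev, nxt in zip(tokens[:-1], tokens[1:]):
        counts[prev, nxt] += 1.0

    # First term: empirical transition probability i -> j.
    # Rows for tokens that never appear as "current" are left at 0.
    row_totals = counts.sum(axis=1, keepdims=True)
    cond = np.divide(counts, row_totals,
                     out=np.zeros_like(counts), where=row_totals > 0)

    # Second term: how often token j appears anywhere in the corpus,
    # i.e. the chance of seeing j next if order carried no information.
    freq = np.bincount(tokens, minlength=vocab_size) / len(tokens)

    return cond - freq[None, :]

B = bigram_association([0, 1, 0, 1, 0, 2], vocab_size=3)
print(B)
```

A positive entry `B[i, j]` means token j follows token i more often than its overall frequency would suggest, and a negative entry means less often.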

What does each transformer weight matrix mean? (from a data-statistics perspective)

Experiments

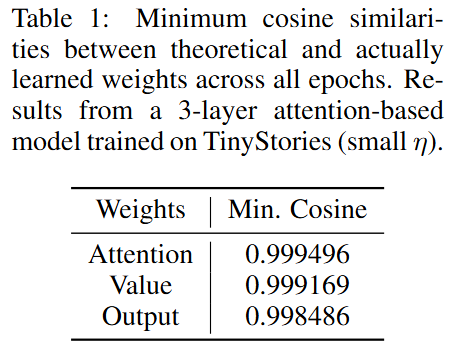

Experiment overview

- Goal: verify that the theory actually holds, and check that semantic structure really exists in the weights

- Are the weights approximated by the leading term?

- Do the 3 basis-function structures actually appear?

- Dataset: TinyStories

- Large matrices are hard to analyze, so the vocabulary is limited to 3,000 words
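One common way to cap a vocabulary, shown here only as an illustration, is to keep the most frequent words and map everything else to a single unknown id. The notes do not say how the 3,000 words were selected, so frequency-based selection, whitespace tokenization, and the helper name `restrict_vocab` are all assumptions.

```python
from collections import Counter

def restrict_vocab(texts, max_words=3000):
    """Keep the `max_words` most frequent whitespace-separated words.

    Returns the word -> id mapping, the id used for out-of-vocabulary
    words, and the texts converted to id sequences.
    """
    counts = Counter(word for text in texts for word in text.split())
    vocab = {word: idx
             for idx, (word, _) in enumerate(counts.most_common(max_words))}
    unk_id = len(vocab)  # one shared id for every word outside the vocabulary
    ids = [[vocab.get(word, unk_id) for word in text.split()] for text in texts]
    return vocab, unk_id, ids

vocab, unk_id, ids = restrict_vocab(["the cat sat", "the cat", "the"], max_words=2)
print(vocab, unk_id, ids)
```

With a cap of 3,000 words plus one unknown id, any token-by-token statistic becomes a matrix of roughly 3,000 × 3,000 entries, which is small enough to inspect directly.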

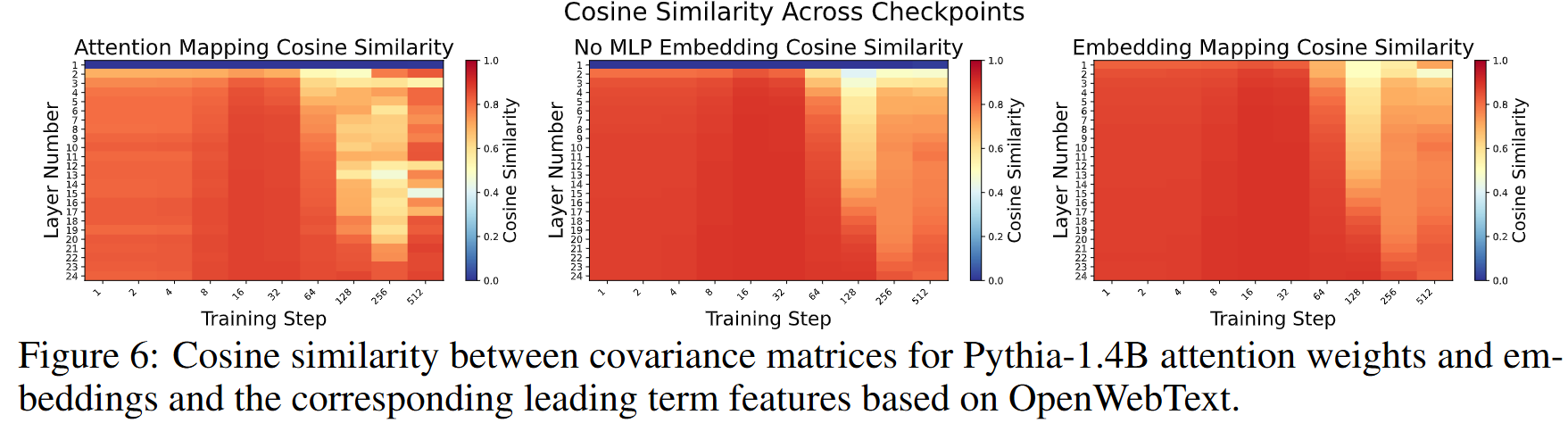

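Experiment result 3: verifying applicability to real LLMs

- Uses Pythia-1.4B (checkpoints are released throughout training, enabling layer-by-layer analysis)

- However, real LLMs include additional components such as MLPs and multi-head attention, so the weights cannot be interpreted directly

⇒ Instead of looking at the weights directly, extract token-to-token correlations indirectly from embeddings!

- Feed each token into the transformer as input

- Compute the embedding before the layer, after the layer, and after attention

- Build embedding matrices from these

⇒ But the theory describes token-to-token correlations, whereas in practice these are token ↔ embedding correlations, so a direct comparison is hard

1. Compute the leading term (actual statistics computed from OpenWebText data)

- Normalize, then compute the covariance matrix (converting the embeddings into a covariance turns them into a token-by-token matrix)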
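The comparison step can be sketched as follows. This is a rough illustration under stated assumptions, not the paper's exact procedure: the normalization used here (centering each dimension, then scaling each token vector to unit length) and the helper names `token_covariance` and `matrix_cosine` are hypothetical choices. The point is that a `(vocab, dim)` embedding matrix becomes a `(vocab, vocab)` matrix that can be compared entry by entry with a theoretical token-by-token matrix.

```python
import numpy as np

def token_covariance(embeddings):
    """Map a (vocab, dim) embedding matrix to a (vocab, vocab) token matrix."""
    # Center each embedding dimension across tokens (assumed normalization)
    centered = embeddings - embeddings.mean(axis=0, keepdims=True)
    # Scale each token vector to unit length, guarding against zero vectors
    norms = np.linalg.norm(centered, axis=1, keepdims=True)
    normalized = centered / np.clip(norms, 1e-12, None)
    # Inner products between tokens give a symmetric token-by-token matrix
    return normalized @ normalized.T / embeddings.shape[1]

def matrix_cosine(a, b):
    """Cosine similarity between two same-shaped matrices, flattened."""
    a, b = a.ravel(), b.ravel()
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

rng = np.random.default_rng(0)
emb = rng.normal(size=(5, 8))      # stand-in for real layer embeddings
theory = rng.normal(size=(5, 5))   # stand-in for a leading-term matrix
print(matrix_cosine(token_covariance(emb), theory))
```

In the actual experiment the stand-in arrays would be replaced by embeddings taken from a Pythia checkpoint and by the leading-term matrix computed from corpus statistics.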

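Real LLMs also initially learn almost exactly as the theory predicts,

and build increasingly complex representations on top of this structure

- The first layer does not yet have enough context, so the cosine similarity for attention is low

- MLP layers take on the role of shaping the embedding space

- After that, the influence of attention grows

- Similarity then rises as increasingly complex context is learned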

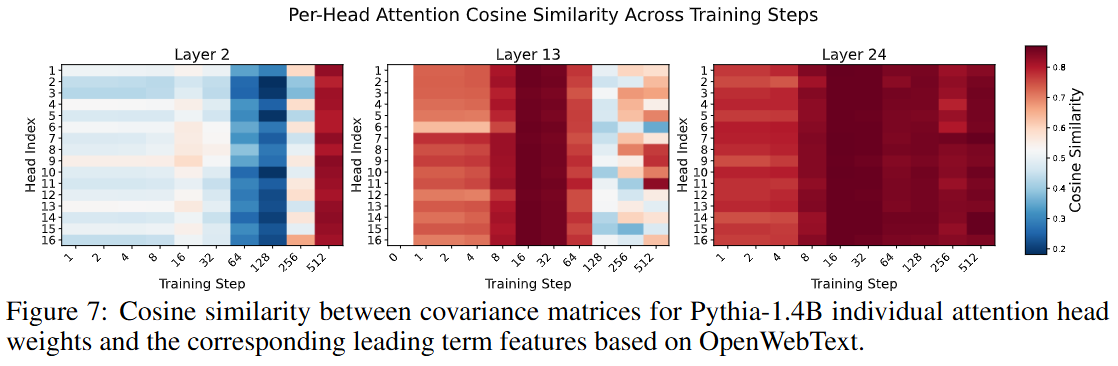

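“How closely does each attention head resemble the theoretically computed structure?”

- Early layers learn semantic associations late → cosine similarity is still low

- In the middle layers, heads begin to split into different roles

- In the final layers, variance decreases → each head's role is clearly distinguished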