On the Role of Attention Heads in Large Language Model Safety

Review

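| Nickname | One-line review | Rating (out of 5) |
|---|---|---|
| MNG | Safety collapsing because of differences between attention heads is probably an inherent property of LLMs. The head-analysis method looks worth borrowing in other research! | 3 |
| 오차즈케 | Other work argues that certain heads cause confusion in the output and should be disabled, whereas this paper says there are heads responsible for safety. The two claims conflict outright, which is interesting. Along the same lines, it would be good to see more analysis of what side effects turning off a specific head has on other functions unrelated to safety. | 4 |
| 방어냠냠 | Isn't whoever invented attention a real genius? It made me think that, beyond safety, many other language-modeling issues could be studied through attention heads. | 3.8 |
| 42REN | It was interesting that safety and interpretability can be assessed through attention heads. On the other hand, turning off a head is said to degrade token selection, so might the ability to pick important tokens suffer as well? | 4.5 |
| 야키토리 | I had never considered that safety might be concentrated in a small number of attention heads, but thinking about it now, it seems natural given how Transformers work! It is impressive that they showed with real experiments how easily safety collapses when only a few heads are manipulated. | 4.5 |
| 텀블러 | Gives good insight as attention-head research, but from the standpoint of practical adversarial attack research, the attack only works on open-source models, so I think broad applicability is hard to achieve. Attacking Llama is fine, but there seems to be a reason why prompting-based red teaming, which can also attack ChatGPT or Claude, is the mainstream. Novelty is very good (feels like research everyone has thought of once but never actually tried). | 4.3 |
| 감자 | A paper that explores attention heads from a safety perspective and runs a wide range of related experiments. The method does not look limited to safety, so it seems broadly applicable. | 4 |
| 새우 | Removing each head is empirical, but the motivation itself is good. It is impressive that they propose a way to find the minimal head group that actually maintains safety. | 4 |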

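TL;DR

LLM safety is actually concentrated in a small number of attention heads, and the paper shows that switching off just those heads 🚨 makes safety collapse immediately 🔍 It proposes Ships and Sahara as a way to find out which heads are really in charge of safety ⚙️🔥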

Summary

- Authors' affiliations: Alibaba, University of Science and Technology of China, Tsinghua University, Nanyang Technological University

Main Idea

Find the relationship between the standard attention mechanism and safety capability, and use it to explore the interpretability of safety!

Background & Motivation

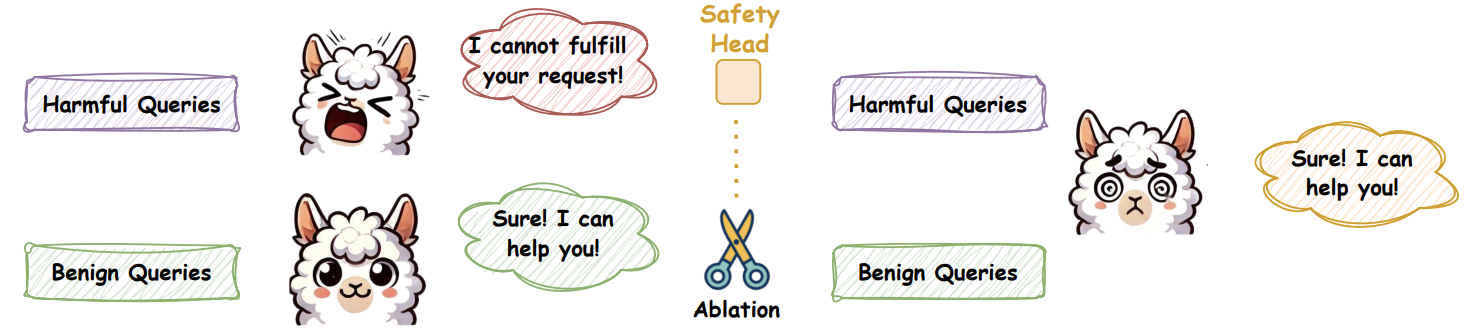

- LLM safety

  - Models are aligned to refuse harmful queries (left side of the figure)

    - e.g. they use rejection tokens such as ‘I cannot’ or ‘As a responsible AI assistant’

  - However, adjusting the probability distribution of specific tokens (a jailbreak attack) weakens safety, so the model answers harmful queries as well (right side of the figure)

    - lowering the probability of rejection tokens such as ‘I cannot’ or ‘As a responsible AI assistant’, or

    - raising the probability of affirmative tokens such as “Sure” or “Here is…”


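- Prior work on LLM safety has mostly been done from the perspective of features, neurons, layers, and parameters

  - e.g. which neurons are responsible for safety

⇒ Let's analyze safety from the multi-head attention (MHA) perspective!

That is, let's quantitatively find the heads that affect safety the most (= safety parameters)!

- Why MHA?

: Because it plays an important role in capturing features from the input sequence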

Contributions (What they’ve revealed)

⚙️ Settings

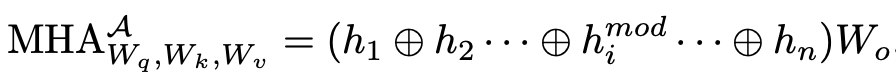

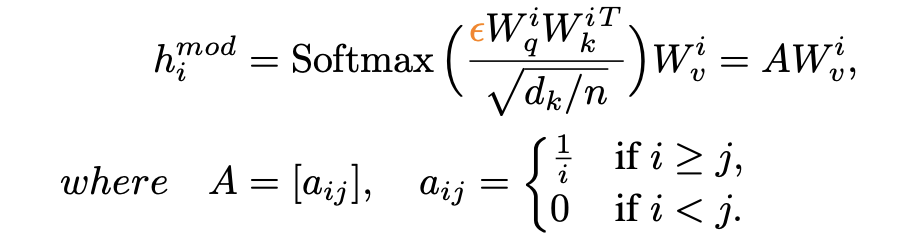

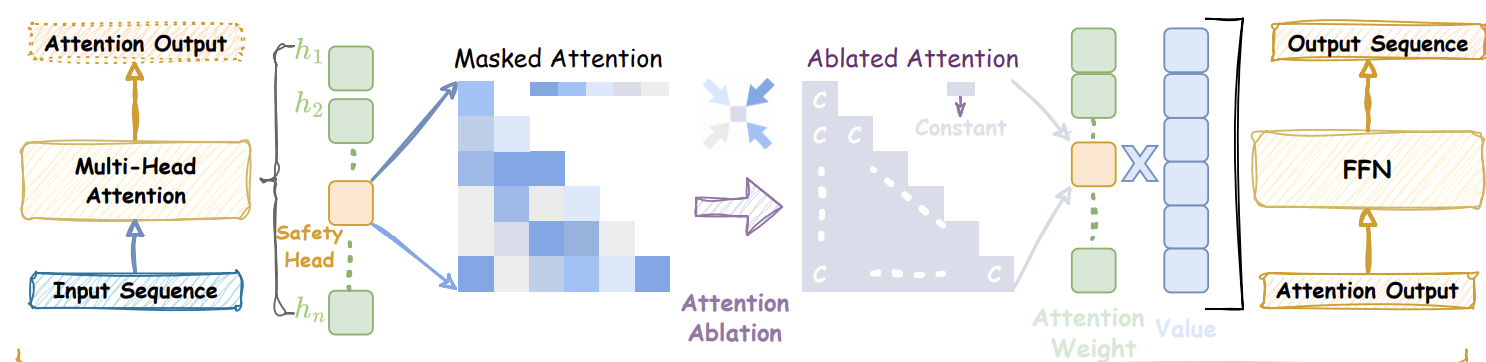

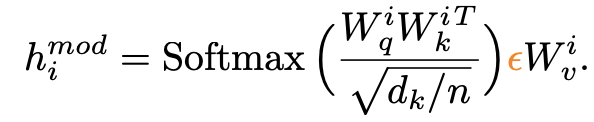

- Uses a modified multi-head attention that makes head ablation easy

annotations

- $Q$, $K$, $V$: Query, Key, and Value matrices

- $h_i$: the i-th attention head

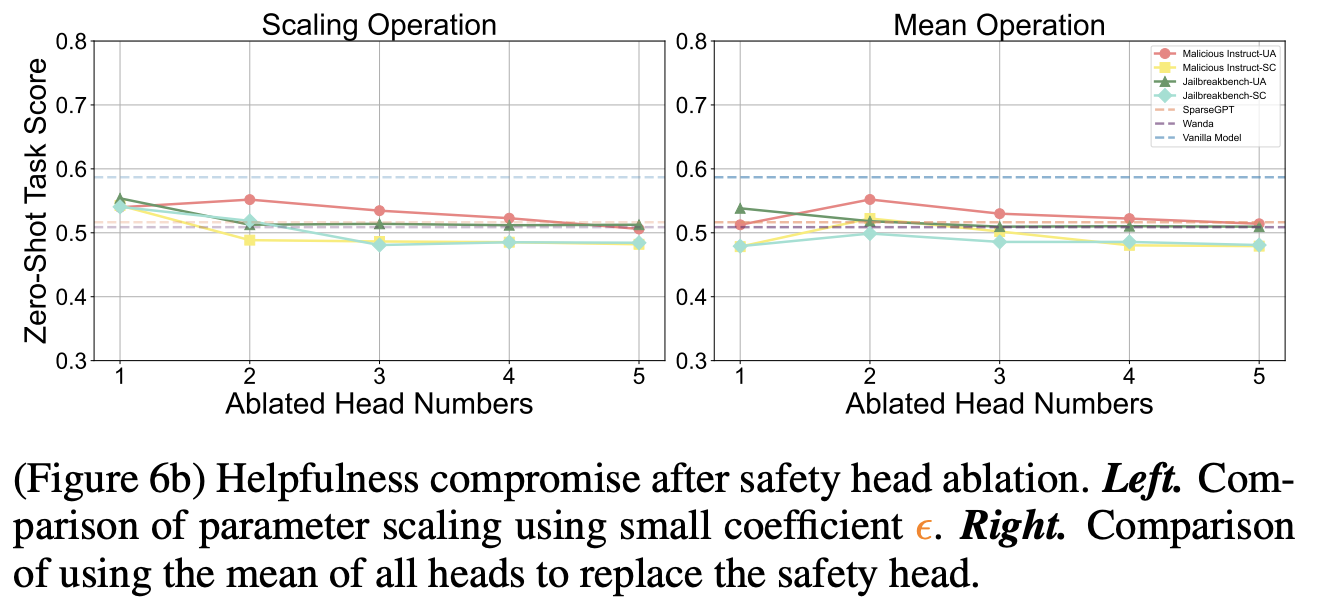

- Intuitively, head ablation == setting that head's output to 0, but the paper performs head ablation with two methods

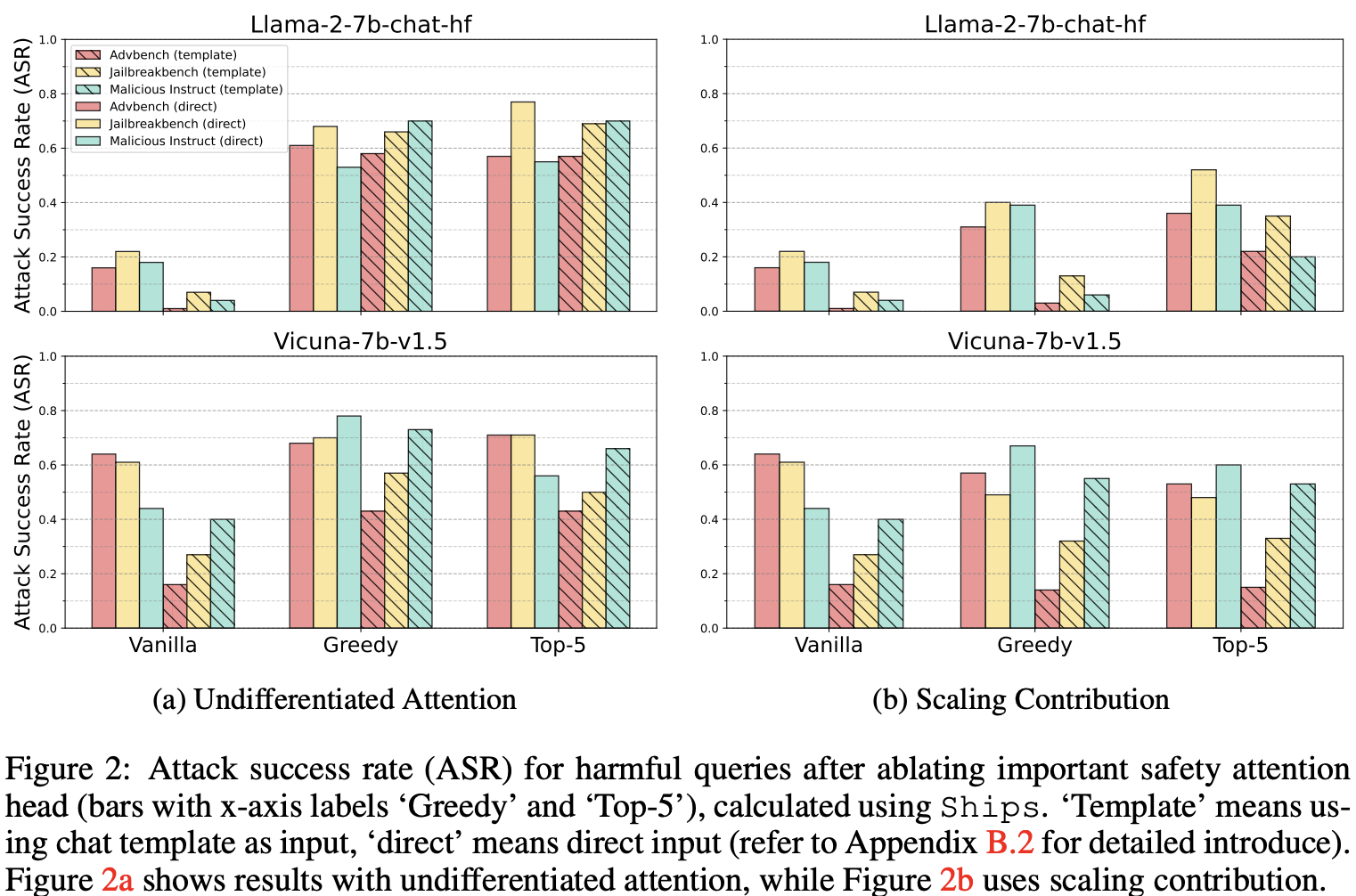

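- Undifferentiated Attention: multiply Q or K (or both) by a very small coefficient ε so that the head's attention weights become nearly uniform (an average) over all tokens

⇒ In other words, it degrades the head's token selection function, i.e. its ability to judge which tokens matter

In this figure, all tokens end up with nearly the same color!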


- backbone LLM: Llama-2-7b-chat, Vicuna-7b-v1.5

- dataset: AdvBench, JailbreakBench, MaliciousInstruct

- decoding setting

  - vanilla: original decoding

  - greedy: greedy decoding

  - top5: top-5 sampling decoding
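As a rough illustration of the Undifferentiated Attention ablation described in the settings above, the toy sketch below scales the query of a single head by a tiny ε and checks that the softmax attention weights flatten toward a uniform distribution. The shapes, the random Q and K, the value of `eps`, and the absence of a causal mask are all assumptions made for the illustration; this is not the paper's implementation.

```python
import numpy as np

def softmax(x, axis=-1):
    # Numerically stable softmax along the given axis.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def head_attention_weights(Q, K, eps=1.0):
    """Attention weights of one head; eps scales the query (hypothetical knob)."""
    d_k = Q.shape[-1]
    scores = (eps * Q) @ K.T / np.sqrt(d_k)
    return softmax(scores, axis=-1)

rng = np.random.default_rng(0)
seq_len, d_k = 6, 16                      # toy sizes, chosen arbitrarily
Q = rng.normal(size=(seq_len, d_k))
K = rng.normal(size=(seq_len, d_k))

normal = head_attention_weights(Q, K)             # token-selective weights
ablated = head_attention_weights(Q, K, eps=1e-5)  # nearly uniform weights

# Largest deviation from the perfectly uniform weight 1 / seq_len.
uniform = np.full((seq_len, seq_len), 1.0 / seq_len)
max_dev_normal = np.abs(normal - uniform).max()
max_dev_ablated = np.abs(ablated - uniform).max()
print(max_dev_normal, max_dev_ablated)
```

Because the head still outputs an average of the value vectors, this kind of ablation removes token selection without zeroing the head's output, which is the difference from the intuitive "set the output to 0" ablation.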


1. For safety interpretability research, reveals for the first time the existence of safety-specific attention heads

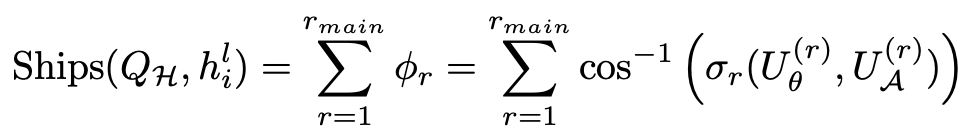

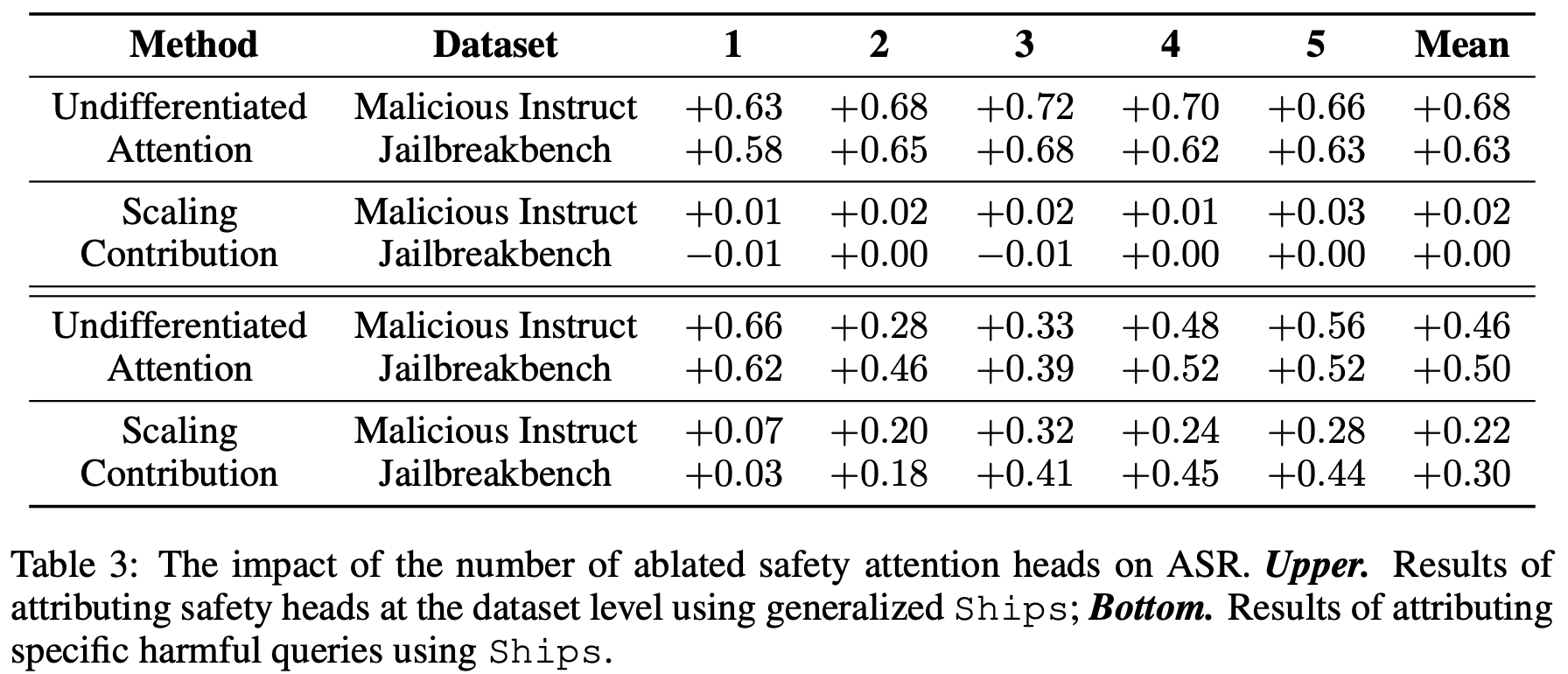

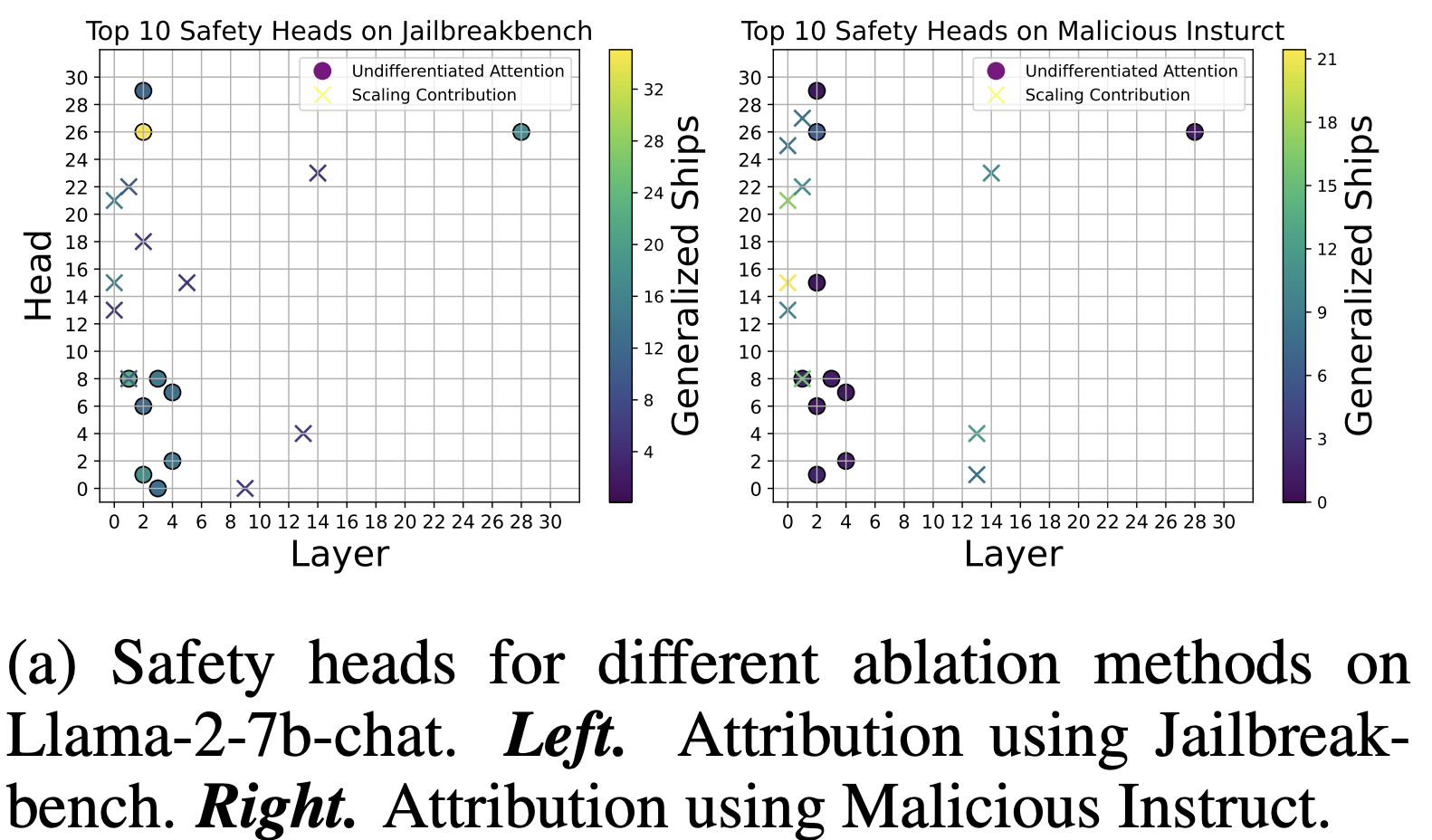

: Results of removing the safety-specific attention heads (i.e. the heads with the highest SHIP score) with the two methods (undifferentiated attention, scaling contribution)

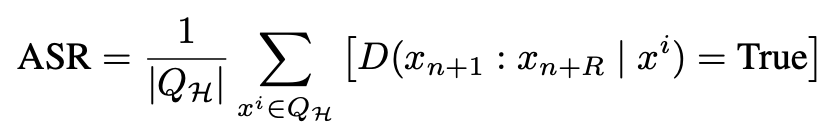

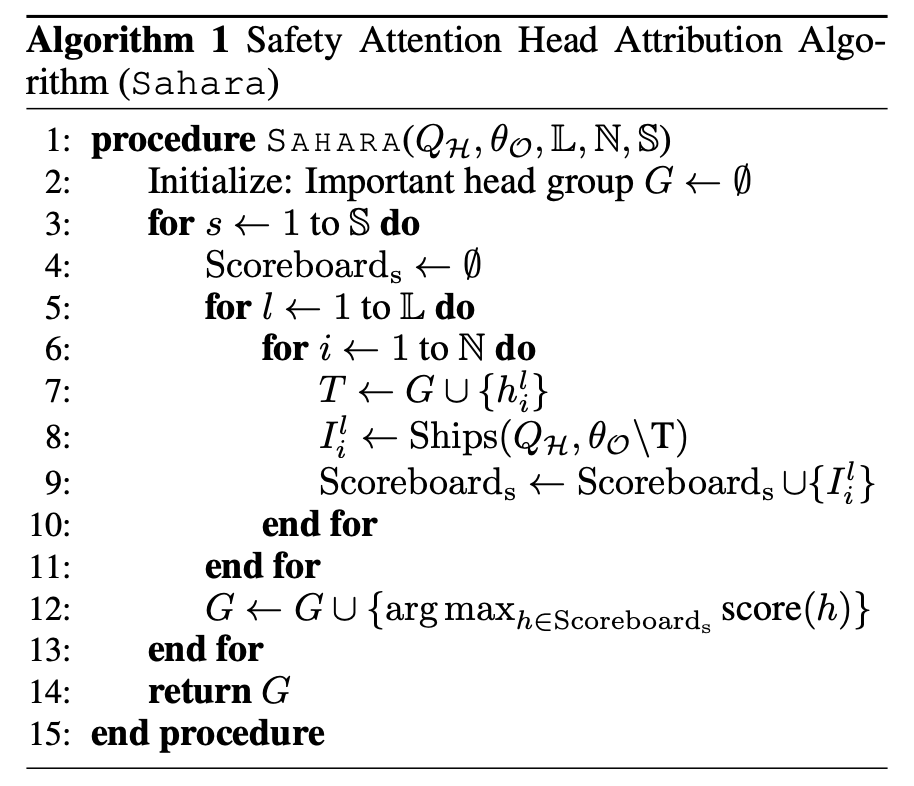

2. To show the safety impact of attention heads, proposes the SHIP (Safety Head ImPortant) Score & SAHARA (Safety Attention Head Attribution Algorithm)

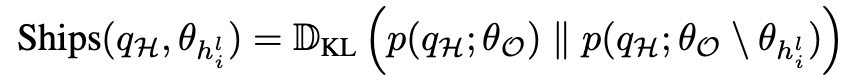

What is the SHIP score?

- A score that indicates how important each attention head is to safety

annotations

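- $q_H$: a harmful query that should be refused

- $\theta_{h_i}$: target attention head

- $\theta_O$: original model parameters

- $D_{\mathrm{KL}}$: Kullback-Leibler divergence

- $\theta_O \setminus \theta_{h_i}$: the original model with the target attention head removed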
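A minimal sketch of the idea behind the SHIP score for a single harmful query: compare the output distribution of the original model with that of the model whose target head is ablated, using KL divergence. The toy vocabulary, the logits, the use of a next-token distribution, and the argument order of the divergence (original first) are assumptions for illustration, not values or details taken from the paper.

```python
import numpy as np

def softmax(logits):
    # Numerically stable softmax over a 1-D logit vector.
    z = logits - logits.max()
    e = np.exp(z)
    return e / e.sum()

def kl_divergence(p, q, tiny=1e-12):
    """D_KL(p || q) for two discrete distributions over the same vocabulary."""
    p = np.clip(p, tiny, 1.0)
    q = np.clip(q, tiny, 1.0)
    return float(np.sum(p * (np.log(p) - np.log(q))))

# Hypothetical next-token logits for one harmful query over a toy vocabulary
# ["I", "Sorry", "Sure", "Here"]; none of these numbers come from the paper.
logits_original = np.array([4.0, 3.0, 0.0, 0.0])  # refusal tokens dominate
logits_head_a = np.array([0.5, 0.0, 4.0, 3.0])    # ablating head A flips to affirmative tokens
logits_head_b = np.array([3.9, 3.1, 0.1, 0.0])    # ablating head B changes almost nothing

p_orig = softmax(logits_original)
ship_a = kl_divergence(p_orig, softmax(logits_head_a))
ship_b = kl_divergence(p_orig, softmax(logits_head_b))
print(ship_a, ship_b)
```

In this toy setup head A would be ranked as far more safety-relevant than head B, because removing it moves the output distribution away from refusal much more.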

⇒ A generalized version of the SHIP score for finding safety-critical attention heads

That is, attention head ablation is evaluated at the dataset level

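- how to make?

  - Stack the top-layer activations $a$ of the harmful query dataset to build a matrix $M$

  - Obtain the left singular matrix $U$ of $M$ via Singular Value Decomposition

  - Obtain the corresponding left singular matrix from the model in which the attention head is ablated

⇒ Based on the observation that the larger the principal angles between the two left singular matrices (original vs. ablated), the more the head is involved in safety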

annotations

- $\sigma_r$: r-th singular value
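The dataset-level steps above can be sketched with NumPy as follows. The sketch assumes that activations are stacked as columns of a `d × n_queries` matrix, that only the first `r` left singular vectors are compared, and that the per-angle values are summed into one score; the random matrices stand in for real activations, and all names are hypothetical.

```python
import numpy as np

def top_left_singular_vectors(M, r):
    """First r left singular vectors of M (columns of M are per-query activations)."""
    U, _, _ = np.linalg.svd(M, full_matrices=False)
    return U[:, :r]

def principal_angle_sum(M_orig, M_ablated, r):
    """Sum of the r principal angles (radians) between the two top-r left singular subspaces."""
    U_o = top_left_singular_vectors(M_orig, r)
    U_a = top_left_singular_vectors(M_ablated, r)
    # Singular values of U_o^T U_a are the cosines of the principal angles.
    sigma = np.linalg.svd(U_o.T @ U_a, compute_uv=False)
    sigma = np.clip(sigma, -1.0, 1.0)  # guard against rounding slightly above 1
    return float(np.sum(np.arccos(sigma)))

rng = np.random.default_rng(0)
d, n_queries, r = 32, 10, 3                          # toy sizes, chosen arbitrarily
M = rng.normal(size=(d, n_queries))                  # stand-in for original activations
M_small_change = M + 1e-6 * rng.normal(size=M.shape) # ablation that barely matters
M_large_change = rng.normal(size=(d, n_queries))     # ablation that reshapes the activations

score_small = principal_angle_sum(M, M_small_change, r)
score_large = principal_angle_sum(M, M_large_change, r)
print(score_small, score_large)
```

A head whose ablation leaves the activation subspace almost unchanged gets a score near zero, while a head whose ablation rotates the subspace gets a large score, which matches the "larger principal angle, more safety involvement" reading of the notes.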

What is SAHARA?

3. By analyzing the importance of the standard multi-head attention mechanism from the LLM safety perspective, eases concerns about LLM risk and contributes to transparency

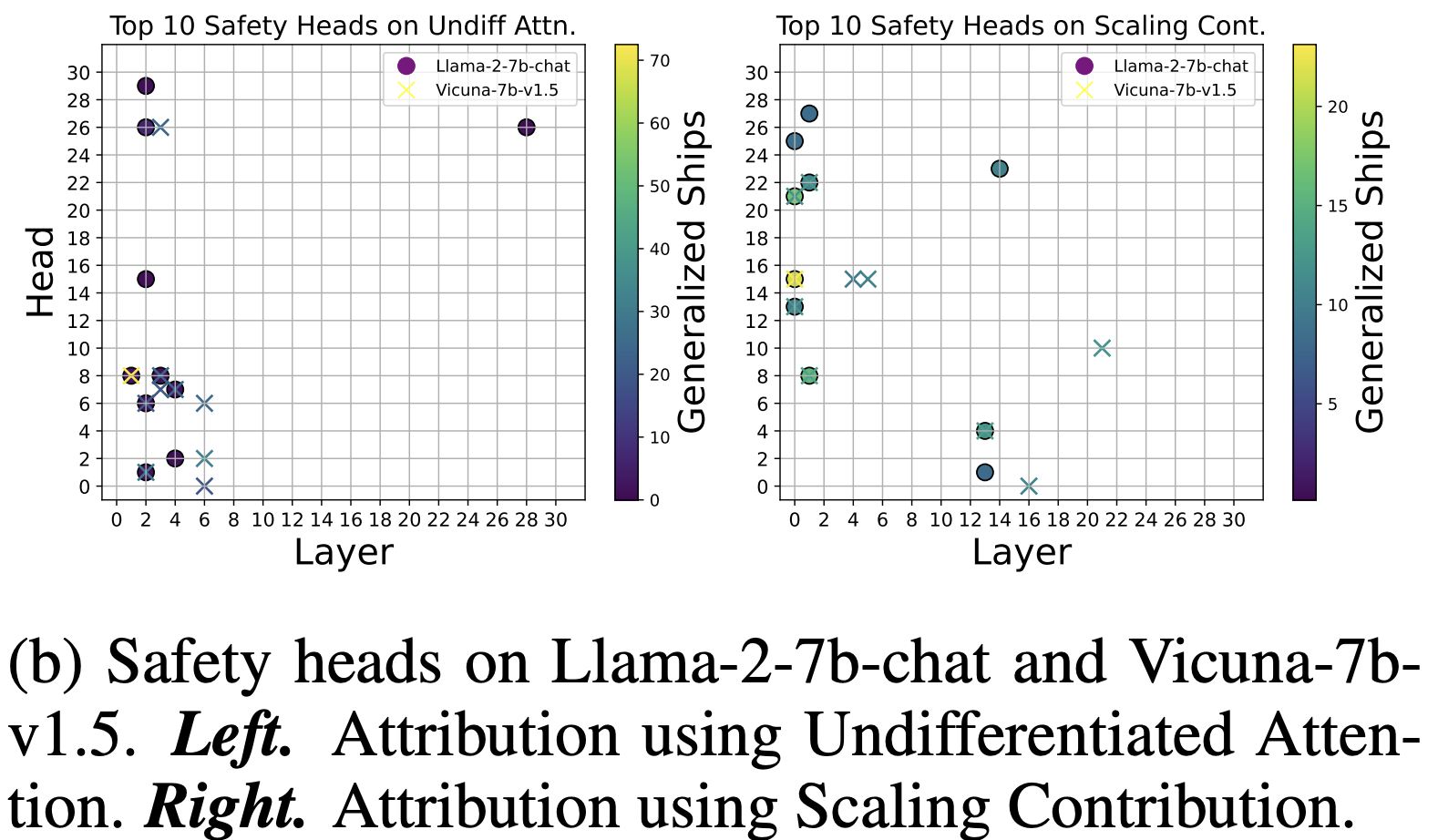

- How much do the safety heads of Vicuna and Llama overlap?

⇒ Safety heads are already largely formed at the pre-training stage and are preserved as-is through the later chat-tuning (Vicuna-style instruction-tuning)
