Generalization or Hallucination? Understanding Out-of-Context Reasoning in Transformers

Review

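| Nickname | One-line review | Rating (out of 5) |
|---|---|---|
| 블랙프라이데이 | This is in the same vein as what our professor said last week (that LLMs quickly learn even arbitrarily generated counterfactual knowledge)! I recall that paper being ontology-based. This paper, however, works the idea out mathematically through matrix-level decomposition and concludes that the hallucination issue can be mitigated through training, which is logical and clever. | 3.8 |
| 3시 | How far can gradient-based interpretation of LLMs go? This paper also evaluates only simple, symbolic-level knowledge; when a broader range of knowledge is given, could the effect of causal reasoning be demonstrated through gradients? | 4.2 |
| 사이시옷 | That generalization ability and hallucination share the same root is very convincing and still a fresh aha moment! To prove it, the authors go from a very simple synthetic experiment all the way to a mathematical proof, which is impressive. | 4.5 |
| 밥 | It nicely shows that LLMs focus on surface-level cues. Rather than checking whether a link between facts is logical given its meaning, they tend to link first. If the link matches reality it becomes generalization, and if it does not it becomes hallucination. I learned a new perspective. | 4 |
| 6시 | Hallucination and generalization share the same cause... Since LLMs find and pick up patterns from only a few examples, this is another reminder that constructing data well and pre-training both matter! | 4.3 |
| 프리바이오틱스는 유산균먹이 | First of all, it is hard. An LLM must hold a huge number of internal associations; when new knowledge is added, is the way it decides how to connect that knowledge to existing knowledge the same from the generalization and hallucination viewpoints? Put that way it feels simple, but actually verifying it seems hard. Approaching it from the attention perspective is a good idea. I don't know how long the Transformer will last, but it reminded me that understanding and exploiting its inherent properties always matters. | 4.5 |
| 고붕 | Viewing generalization and hallucination as the same mechanism seems like a new perspective. Out of context, the model will pick a plausible general pattern, which raises generalization while also raising the chance of hallucination. Rather than suppressing hallucination indiscriminately, we should minimize it without hurting generalization performance. | 4.2 |
| 욘세이 | That generalization and hallucination share the same cause seems to be the paper's key finding. Countermeasures therefore seem necessary for cases where fixing hallucination hurts generalization or, conversely, where hallucination arises during generalization. | 4.7 |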

TL;DR

💡

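Generalization and hallucination are both manifestations of out-of-context reasoning, and this behavior is learnable because the output matrix and the value matrix are kept separate (factorized)!

- Output matrix: the matrix applied after Attention(Q, K, V) and before the FFN (it matches dimensions or, in multi-head attention, aggregates information across heads)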

Summary

Motivation

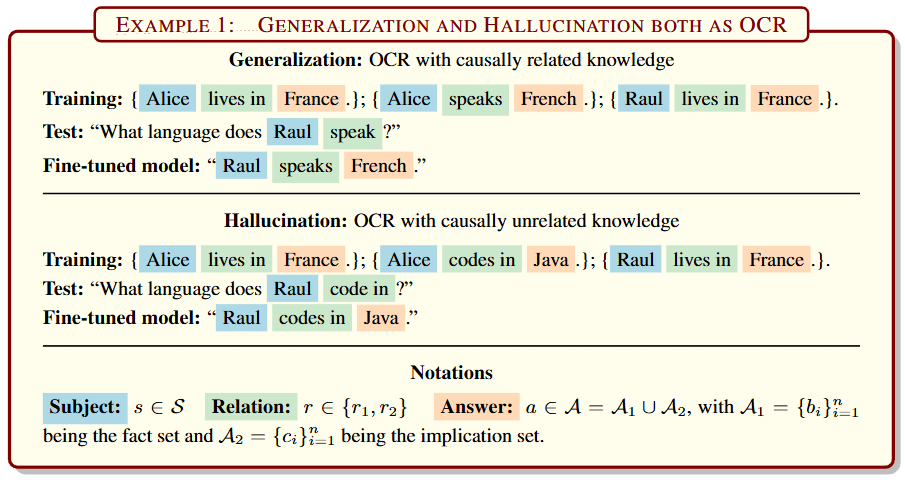

- Research Question: Do generalization and hallucination on newly injected factual knowledge arise from the same underlying mechanism?

- When an LLM learns new knowledge it generalizes well, but hallucination clearly occurs too

- Do the two have different causes, or the same one?

💡

Generalizability and hallucination are both treated as the same kind of textual implication (entailment)!!

Contribution

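- Shows, and derives mathematically, that the generalization (natural-language inference) ability of LLMs (more precisely, of the attention mechanism) and hallucination share the same root cause

- Even a single-layer, single-head attention transformer performs this kind of out-of-context reasoning

This holds only when the output matrix and the value matrix are kept separate (factorized)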


Out of Context Reasoning (OCR) in LLM

Implication

An underlying rule means that any

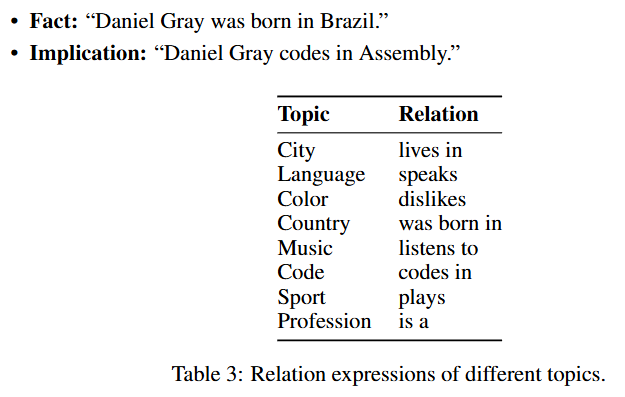

subject having relation with also has relation with . For example, means “people live in Paris speak French”.- : Fact, : Implication

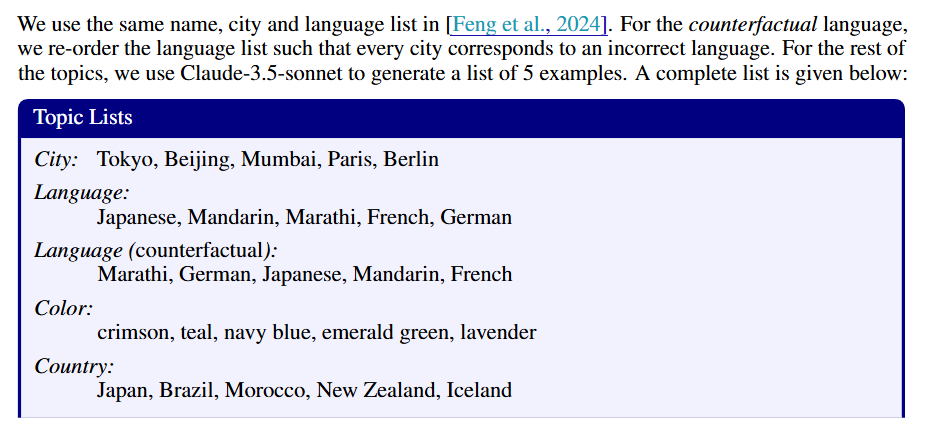

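- Train on only 20% of the synthetic data and test on the remaining 80% (baseline: 20%)

- Inject knowledge into LLMs with the synthetic data, then measure generalization and hallucination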
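The split described above can be sketched in a few lines of Python. This is a minimal illustration, not the paper's data pipeline: the function `build_ocr_dataset`, the subject names, and the example rules are all hypothetical, and it assumes that every subject's fact is seen during training while implications are seen only for the training subjects.

```python
import random

def build_ocr_dataset(num_subjects, rules, train_fraction=0.2, seed=0):
    """Build (subject, relation, answer) triples for a toy OCR experiment.

    rules: list of ((r1, b), (r2, c)) pairs, i.e. fact -> implication.
    Assumption: facts are trained for every subject; implications are
    trained only for the first `train_fraction` of subjects.
    """
    rng = random.Random(seed)
    subjects = [f"subject_{i}" for i in range(num_subjects)]
    rng.shuffle(subjects)
    num_train = int(num_subjects * train_fraction)

    train, test = [], []
    for idx, s in enumerate(subjects):
        (r1, b), (r2, c) = rules[idx % len(rules)]
        train.append((s, r1, b))          # the fact is always seen
        if idx < num_train:
            train.append((s, r2, c))      # implication seen for train subjects
        else:
            test.append((s, r2, c))       # implication held out for evaluation
    return train, test

# Hypothetical rules: one causally plausible, one with no causal link.
example_rules = [
    (("lives in", "Paris"), ("speaks", "French")),
    (("lives in", "Paris"), ("codes in", "Java")),
]
train_triples, test_triples = build_ocr_dataset(10, example_rules)
print(len(train_triples), len(test_triples))
```

With 10 subjects and a 20% training fraction, 2 subjects contribute both triples and the other 8 contribute only their fact, so the model is evaluated on 8 held-out implications.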

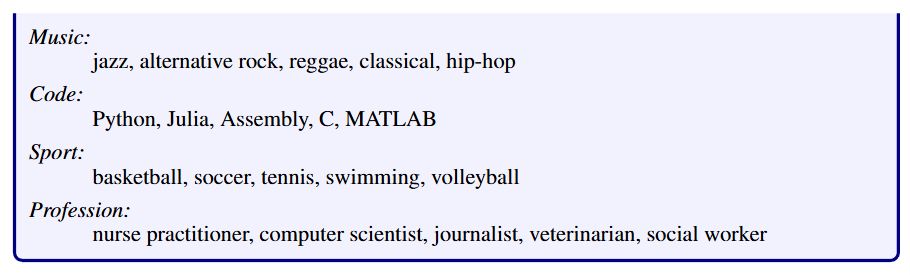

- LLMs: Gemma-2-9B, OLMo-7B, Qwen-2-7B, Mistral-7B-v0.3, Llama-3-8B

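- Metric: mean rank (the average rank of the correct implication; lower is better)

- Results

- The models generalize well on causally valid implications, but they also learn to link pairs that have no causal relationship

- Very little data suffices (as few as 4 examples per fact-implication pair)

- Generalization is the stronger of the two because those associations resemble the pre-training data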
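To make the mean-rank metric concrete, here is a small NumPy sketch. It assumes the rank is taken over the model's next-token scores, with rank 1 meaning the correct implication has the highest score; the function name `mean_rank` and the toy scores are illustrative, and the paper's exact candidate set may differ.

```python
import numpy as np

def mean_rank(logits, targets):
    """Average 1-based rank of the target token under the given scores.

    logits:  (N, V) array of scores, one row per test prompt.
    targets: (N,) array with the index of the correct implication token.
    """
    logits = np.asarray(logits, dtype=float)
    targets = np.asarray(targets)
    target_scores = logits[np.arange(len(targets)), targets]
    # Rank = 1 + number of tokens scored strictly higher than the target.
    ranks = 1 + (logits > target_scores[:, None]).sum(axis=1)
    return ranks.mean()

toy_logits = [[2.0, 0.5, 1.0],   # target 0 has the top score -> rank 1
              [0.1, 0.3, 0.9]]   # target 1 is beaten by token 2 -> rank 2
toy_targets = [0, 1]
print(mean_rank(toy_logits, toy_targets))  # 1.5
```

A mean rank close to 1 on held-out implications indicates that the model has linked the fact to the implication, whether or not that link is causally justified.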

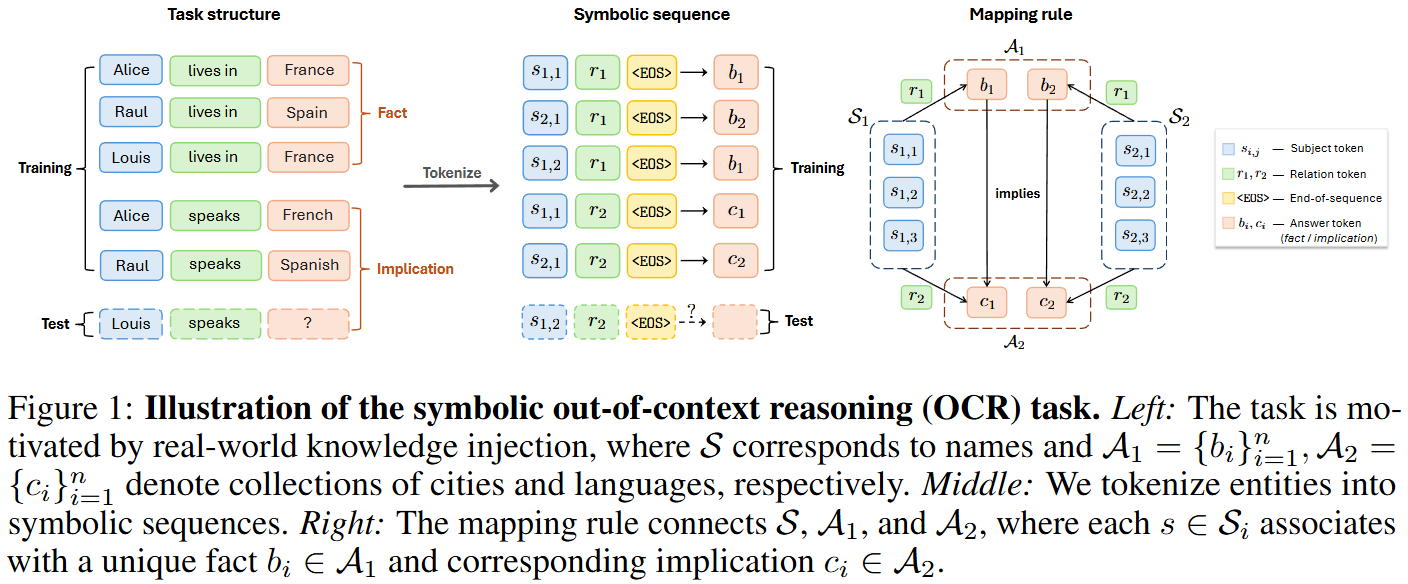

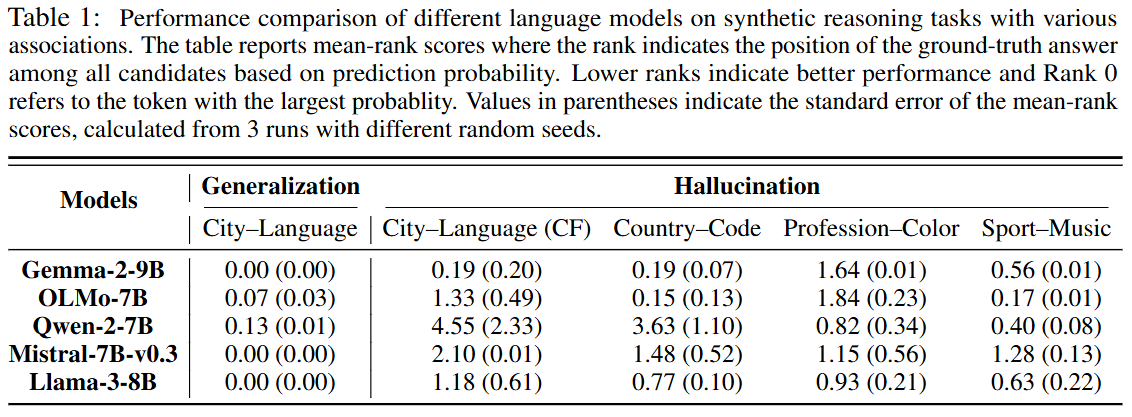

One-Layer Attention-Only Transformers can Do Symbolic OCR

- The synthetic data generated above is used to run experiments on a very simple transformer

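- Put simply, the model is trained on fact and implication sequences such as $(s, r_1, b)$ and $(s, r_2, c)$, and for a test subject $s$ it must predict the implication answer $c$ when given $(s, r_2)$

- Each token ($s$, $r$, $b$, $c$) is embedded as a one-hot vector

- The embedded input is denoted $X$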

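- With the output and value matrices factorized, this simple transformer produces the following output vector

- Factorized model:

- When the output and value matrices are merged into a single matrix, the model outputs the following

- Non-factorized model:

- The next-token prediction probability is written in the familiar form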

-

- The training loss and test loss are as follows

-

-
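The quantities listed above can be written out as follows. This is a hedged reconstruction under assumed notation, not a copy of the paper's equations: $X \in \mathbb{R}^{T \times d}$ stacks the one-hot token embeddings, $x_T$ is the last token, $W_{KQ}$ is a combined key-query matrix, $W_O, W_V \in \mathbb{R}^{d \times d_h}$ are the separate output and value matrices, and $W_{OV} \in \mathbb{R}^{d \times d}$ is their merged counterpart.

```latex
% Assumed notation (not taken verbatim from the paper).
\begin{align*}
a(X) &= \operatorname{softmax}\!\left(X W_{KQ}\, x_T\right) \in \mathbb{R}^{T}
  && \text{attention weights queried by the last token} \\
f_{\text{fact}}(X) &= W_O W_V^{\top} X^{\top} a(X)
  && \text{factorized model} \\
f_{\text{non-fact}}(X) &= W_{OV}\, X^{\top} a(X)
  && \text{non-factorized model} \\
p_\theta(y \mid X) &= \frac{\exp\!\big(f(X)_y\big)}{\sum_{y'} \exp\!\big(f(X)_{y'}\big)}
  && \text{next-token probability} \\
\mathcal{L}_{\mathcal{D}}(\theta) &= -\frac{1}{|\mathcal{D}|} \sum_{(X, y) \in \mathcal{D}} \log p_\theta(y \mid X),
  \quad \mathcal{D} \in \{\mathcal{D}_{\text{train}}, \mathcal{D}_{\text{test}}\}
  && \text{training and test loss}
\end{align*}
```

Under this notation the two models express the same family of functions whenever $W_{OV} = W_O W_V^{\top}$; what differs is the parameterization that gradient descent acts on.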

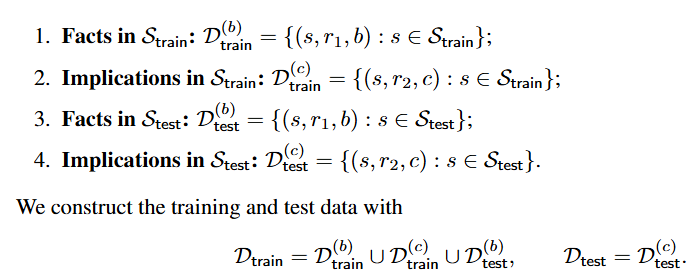

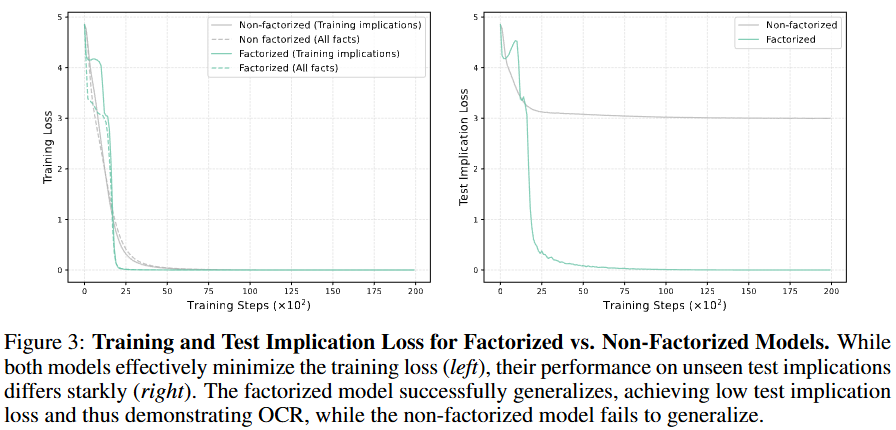

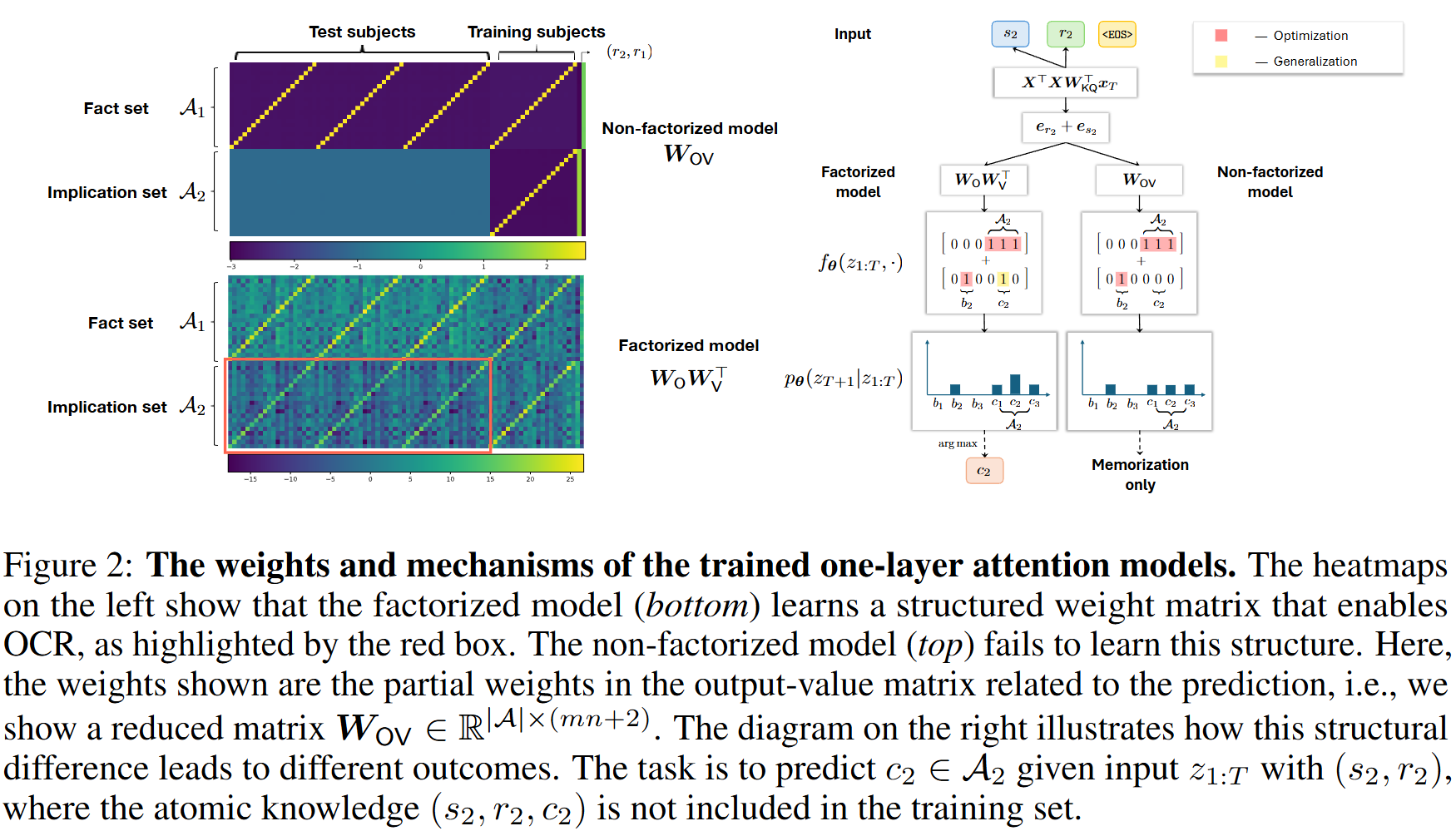

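- As the left side of Figure 2 shows, on the test-implication entries the factorized model exhibits a weight pattern similar to the one on the training entries, whereas the non-factorized model can only memorize the training data

- So, as on the right, for a test subject $s$ the factorized model already places weight on both $b$ and $c$ (it never saw $c$ for this subject during training, but other subjects with $b$ had it)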
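The two parameterizations compared here can be sketched as a forward pass in NumPy. This is an illustration under assumptions rather than the authors' code: tokens are one-hot rows of `X`, the last token acts as the query, and the names `W_O`, `W_V`, `W_OV`, and `W_KQ` as well as all sizes are chosen for the example.

```python
import numpy as np

def softmax(z):
    z = z - np.max(z)              # shift for numerical stability
    e = np.exp(z)
    return e / e.sum()

def attention_readout(X, W_KQ):
    """Attention-weighted average of the token embeddings (query = last token)."""
    scores = X @ W_KQ @ X[-1]      # shape (T,)
    return X.T @ softmax(scores)   # shape (d,)

def factorized_logits(X, W_O, W_V, W_KQ):
    # Output and value matrices are separate trainable parameters.
    return W_O @ (W_V.T @ attention_readout(X, W_KQ))

def non_factorized_logits(X, W_OV, W_KQ):
    # Output and value are merged into one trainable matrix.
    return W_OV @ attention_readout(X, W_KQ)

def next_token_loss(logits, target):
    """Cross-entropy of the target token under softmax(logits)."""
    return -np.log(softmax(logits)[target])

rng = np.random.default_rng(0)
d, d_h = 6, 4                              # vocabulary size, hidden width (assumed)
X = np.eye(d)[[0, 3]]                      # prompt: subject token 0, relation token 3
W_O = rng.normal(size=(d, d_h))
W_V = rng.normal(size=(d, d_h))
W_KQ = rng.normal(size=(d, d))

logits_fact = factorized_logits(X, W_O, W_V, W_KQ)
logits_merged = non_factorized_logits(X, W_O @ W_V.T, W_KQ)
loss = next_token_loss(logits_fact, target=5)
print(np.allclose(logits_fact, logits_merged), loss)
```

With `W_OV = W_O @ W_V.T` both forward passes give identical logits, which highlights that the contrast in the summary comes from how each parameterization is trained, not from what it can represent.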

Theoretical Results

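- ~~Mathematical proof~~

- The factorized model finds a solution that minimizes the nuclear norm. To do so, it does not fill the test-data weights with zeros; it fills them in through their correlation with other data, which yields a low-rank structure

- The non-factorized model minimizes the Frobenius norm during training, so it assigns zero weight to data it has not seen

- From the SVM perspective, the paper also proves that the non-factorized model has zero margin on new knowledge, whereas the factorized model has a positive margin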
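The contrast between the two norms can be seen in a toy matrix-completion example. The sketch below is a simplification made up for illustration, not the paper's weight matrix: rows stand for a training subject and a test subject, columns for the fact answer and the implication answer, and the only unknown entry `x` is the test subject's implication weight.

```python
import numpy as np

def completion(x):
    # Row 0: training subject (fact and implication both observed as 1).
    # Row 1: test subject (fact observed as 1, implication weight x unknown).
    return np.array([[1.0, 1.0],
                     [1.0, x]])

candidates = np.linspace(-1.0, 2.0, 301)
fro = np.array([np.linalg.norm(completion(x), ord="fro") for x in candidates])
nuc = np.array([np.linalg.norm(completion(x), ord="nuc") for x in candidates])

best_fro = candidates[np.argmin(fro)]   # minimizer of the Frobenius norm
best_nuc = candidates[np.argmin(nuc)]   # minimizer of the nuclear norm
print(round(best_fro, 2), round(best_nuc, 2))
```

The Frobenius norm is $\sqrt{3 + x^2}$, so it is smallest at $x = 0$ and leaves the unseen entry empty. The nuclear norm equals $\sqrt{(x-1)^2 + 4}$ for $x < 1$ and $1 + x$ for $x \ge 1$, so it is smallest at $x = 1$, which copies the training subject's pattern and makes the matrix rank 1. That filled-in entry is the kind of out-of-context association that shows up as generalization when the rule is true and as hallucination when it is not.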