Layer by Layer: Uncovering Hidden Representations in Language Models

Review

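| Nickname | One-line review | Rating (out of 5) |
|---|---|---|
| 마스킹테이프 | The analysis in this paper looks genuinely useful. The best layer may differ for each downstream task, and if task performance depends on which layer is used, this is a meaningful study. The finding that CoT fine-tuning enriches intermediate-layer representations is also worth revisiting to understand why it happens. | 4.3 |
| 동까스 | I took it for granted that the final layer would have the richest representation, so the experimental results are surprising. Given how consistently this shows up across most results, there seems to be plenty left to research here. | 4.4 |
| 귤 | Most papers I have read so far used the last layer by default, and I also assumed that was the best choice; this paper seems to overturn that notion. Which layer to use should be an important consideration, chosen to fit the characteristics of the task at hand. | 4.3 |
| 수면장애 | In the second semester of my master's program I collected papers that use hidden states and tried to summarize "when should which hidden state be used?" I remember being puzzled that there was far less of a pattern than expected and everyone simply chose as they pleased. Now I finally understand why. Also, including the VLM experiments makes the results much more credible! | 4.3 |
| 이어폰 | I have seen many papers that use intermediate-layer representations on the premise that they are richer than the last layer's, and this paper actually demonstrates it experimentally. The comparison with vision transformers is interesting, and I wonder whether training methods beyond CoT, such as RL, also have an effect. | 4 |
| 사과 | Most XAI and representation research explains the reasons behind inferences or representations based on the last layer. By measuring the importance of intermediate-layer representations with metrics, this paper makes that importance more credible. | 4.6 |
| 7일 | The biggest contribution is validating the claims through diverse experiments built on a shared mathematical metric. MTEB tasks evaluate the quality of the text embeddings themselves, so would the same trend hold for higher-level tasks (QA, NLG)? I suspect not. Perhaps intermediate layers should be emphasized when computing embeddings of an intermediate flow, as in message passing, while the final layer should be the focus for the final fine-tuning task? | 4.4 |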

TL;DR

💡

In language models trained autoregressively, the intermediate-layer representations are the richest!

Summary

- Author affiliations: University of Kentucky (USA), NYU, UCLA, Meta

- Citations: 89

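Motivation

- LLMs mostly use the output of the last layer for downstream tasks

- This rests on the common assumption that "shallow layers merely capture low-level information"

- The authors question this assumption!

- "Does the last layer always provide the best representation?"

→ Intermediate layers may actually be more expressive and may perform well across a variety of tasks, so let's verify this empirically!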

Key Findings

- Experiments show that intermediate layers consistently outperform the last layer

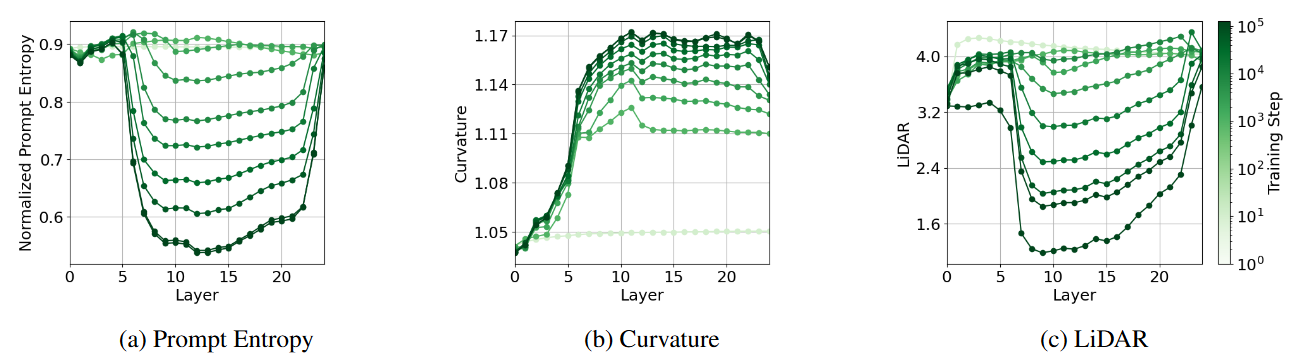

- Compares how representation quality differs across model architectures (type, size) and training progress

- CoT fine-tuning enriches intermediate-layer representations

Designing the Evaluation Metrics

How can the quality of a representation be evaluated?

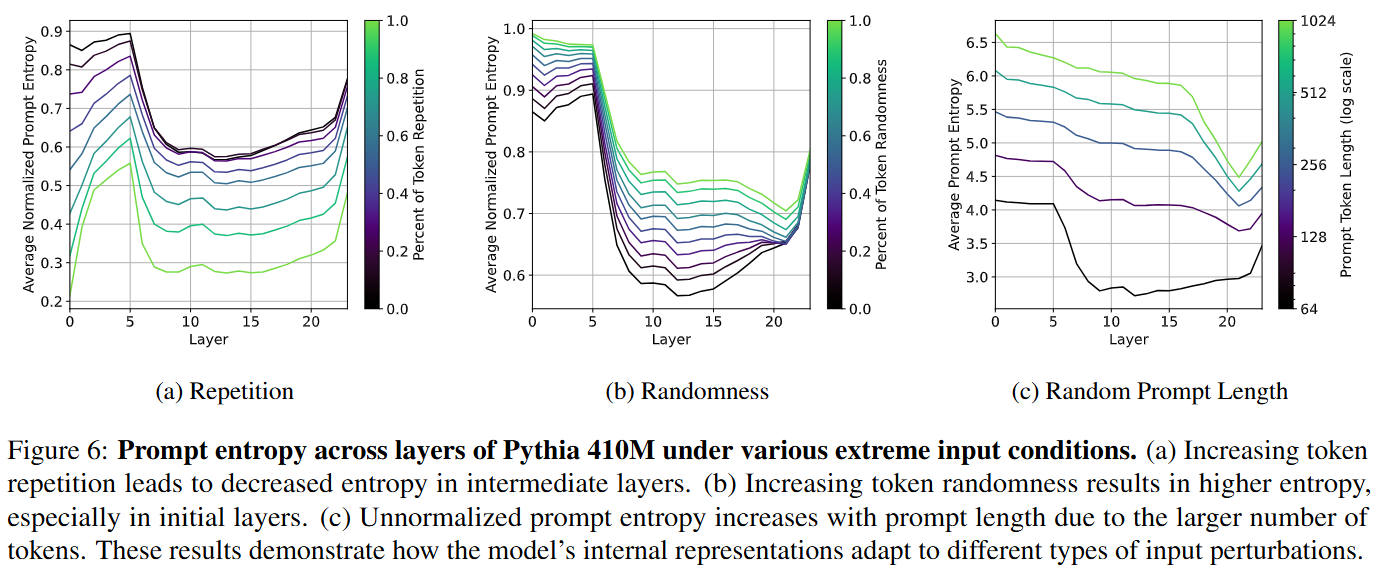

- How compressed is the representation?

- How robust is it to perturbation / augmentation of the input?

- How does it arrange different inputs geometrically?

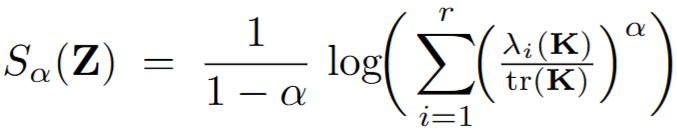

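Matrix-Based Entropy: A Shared Mathematical View

- Entropy is computed from the eigenvalues: $S_\alpha(Z) = \frac{1}{1-\alpha}\log\left(\sum_i \left(\frac{\lambda_i(K)}{\operatorname{tr}(K)}\right)^{\alpha}\right)$

  - $\lambda_i$: the amount of information carried along the direction of the $i$-th eigenvector, i.e. an axis that represents the data

  - $Z$: Representation matrix

  - $K = ZZ^\top$: Gram matrix (similarity matrix between representations)

  - $\alpha$: Smoothing exponent

→ Measures how strongly the input samples are concentrated on a few eigenvalues

→ Intuitively, the more evenly the eigenvalues are spread, the higher the entropy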
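
The entropy described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: it assumes the Rényi-style definition $S_\alpha(Z) = \frac{1}{1-\alpha}\log\sum_i (\lambda_i(K)/\operatorname{tr}(K))^\alpha$ with $K = ZZ^\top$, and the function name `matrix_entropy` and the `eps` cutoff are hypothetical choices made for this example.

```python
import numpy as np

def matrix_entropy(Z, alpha=1.0, eps=1e-12):
    """Matrix-based entropy of a representation matrix Z (N samples x D dims).

    Assumed definition: S_alpha = log(sum_i p_i**alpha) / (1 - alpha),
    where p_i are the eigenvalues of K = Z Z^T divided by tr(K).
    """
    K = Z @ Z.T                                  # Gram matrix
    eigvals = np.linalg.eigvalsh(K)              # K is symmetric PSD
    eigvals = np.clip(eigvals, 0.0, None)        # remove tiny negative round-off
    p = eigvals / eigvals.sum()                  # normalize by the trace
    p = p[p > eps]                               # drop numerically zero eigenvalues
    if np.isclose(alpha, 1.0):
        # alpha -> 1 limit: Shannon entropy of the eigenvalue spectrum
        return float(-(p * np.log(p)).sum())
    return float(np.log((p ** alpha).sum()) / (1.0 - alpha))

# Evenly spread eigenvalues (orthogonal samples) give high entropy ...
spread = matrix_entropy(np.eye(4))
# ... while identical samples put all mass on one eigenvalue, giving low entropy.
collapsed = matrix_entropy(np.ones((4, 4)))
```

For four mutually orthogonal samples the spectrum is uniform and the entropy equals log 4; for four identical samples a single eigenvalue carries everything and the entropy is 0.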

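Insight 1 (Information-compression view)

- Only a few eigenvalues are large → information is concentrated in a low-dimensional subspace and compressed onto a few axes → low entropy

- Eigenvalues are evenly distributed → information is spread roughly equally over many axes → high entropy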

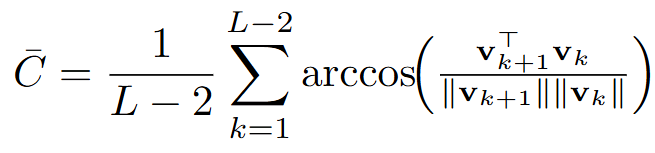

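Insight 2 (Geometric view)

- If the token embeddings follow a smoothly connected path → low curvature and high entropy

- If the path bends abruptly (i.e. the embedding direction of consecutive tokens changes sharply) → high curvature, and the eigenvalue distribution becomes skewed, giving low entropy

→ Embedding curvature is therefore also reflected in the eigenvalue distribution (= entropy)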
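
To make the notion of curvature concrete, here is a small sketch that measures the average turning angle between consecutive steps of a token trajectory. The exact definition used in the paper may differ; this assumes curvature is the mean angle between successive difference vectors `x[k+1] - x[k]`, and the name `mean_curvature` is hypothetical.

```python
import numpy as np

def mean_curvature(tokens):
    """Average turning angle (radians) along a token trajectory of shape (T, D).

    Assumes no two consecutive tokens are identical (zero-length steps
    would cause a division by zero).
    """
    steps = np.diff(tokens, axis=0)              # v_k = x_{k+1} - x_k
    a, b = steps[:-1], steps[1:]                 # consecutive step pairs
    cos = (a * b).sum(axis=1) / (
        np.linalg.norm(a, axis=1) * np.linalg.norm(b, axis=1)
    )
    angles = np.arccos(np.clip(cos, -1.0, 1.0))  # clip guards against round-off
    return float(angles.mean())

# A straight path: every step points the same way, so the curvature is 0.
straight = np.outer(np.arange(5), [1.0, 2.0])
# A zigzag path: every step turns by 90 degrees, so the curvature is pi / 2.
zigzag = np.array([[0, 0], [1, 0], [1, 1], [2, 1], [2, 2]], dtype=float)
```

Calling `mean_curvature(straight)` and `mean_curvature(zigzag)` contrasts the smooth and the abruptly bending cases from the bullets above.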

Insight 3 (Robustness to input perturbation/augmentation)

- Strong invariance (= robust) → samples with the same meaning cluster stably in the embedding space → entropy is preserved


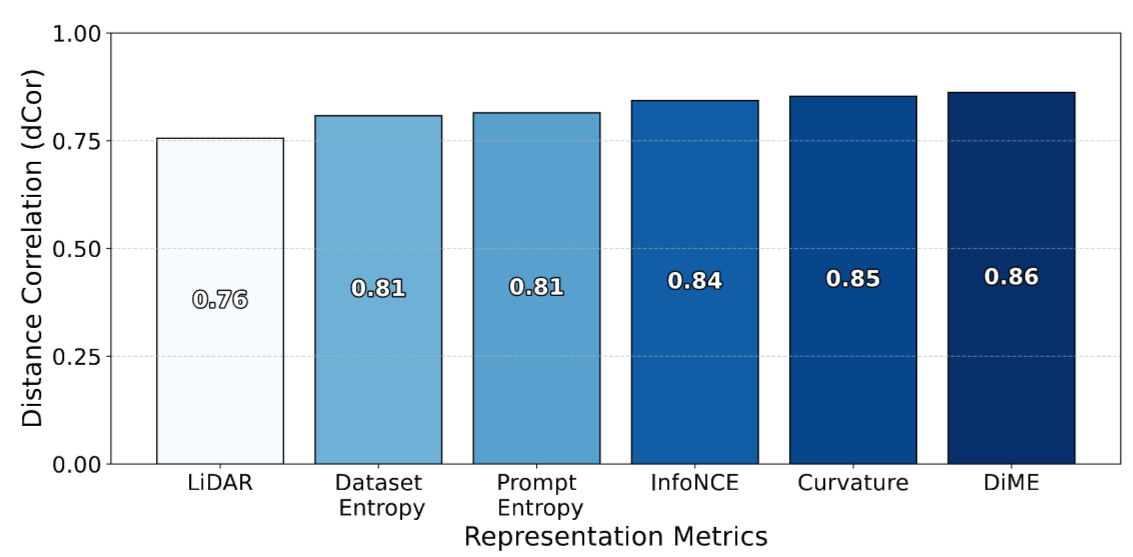

Seven Representation Evaluation Metrics

Information-theoretic

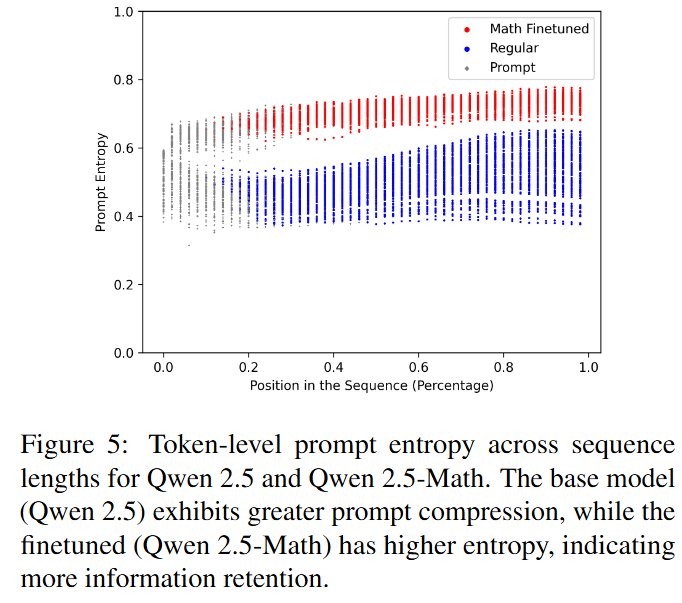

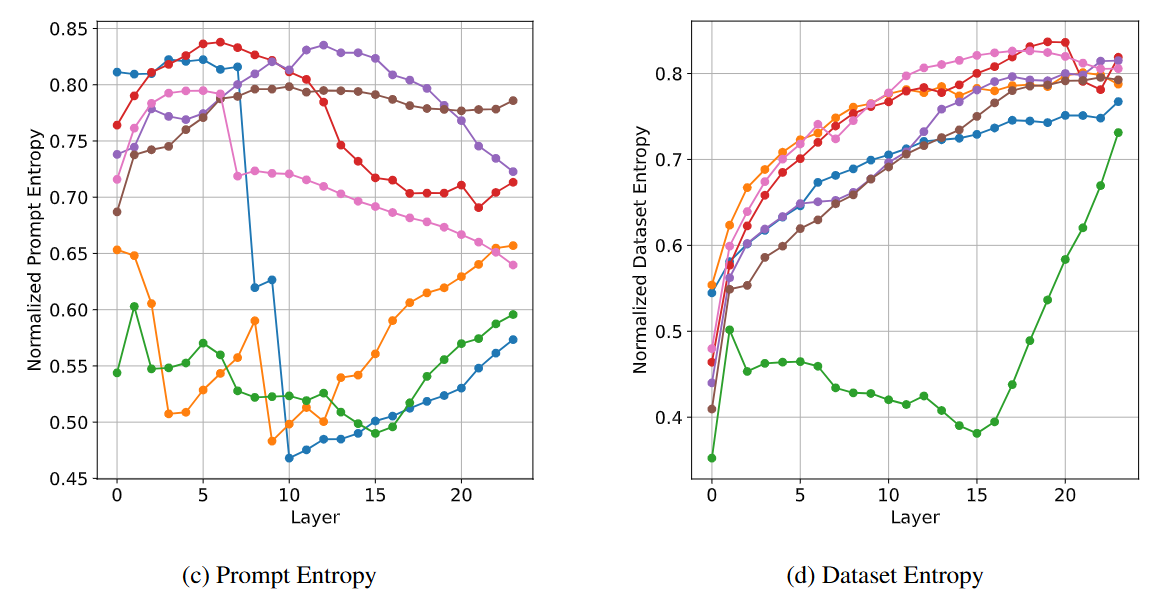

- Prompt Entropy

  - How diversely are the token embeddings spread within a single prompt?

  - High entropy → diverse representations → less redundant, richer features

  - Low entropy → similar representations → information is compressed

- Dataset Entropy

  - How diversely are the embeddings of many prompts spread across the whole dataset?

  - High entropy → representations of different prompts are well separated

  - Low entropy → representations become similar regardless of the input (possible information loss)

- Effective Rank

  - How many dimensions does the representation space actually use?

  - Lower values → most information is compressed into a few dimensions

  - Higher values → information is spread evenly
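
The three information-theoretic metrics differ mainly in which matrix the spectrum is taken from. The sketch below is illustrative only and rests on stated assumptions: prompt entropy uses the token matrix of one prompt, dataset entropy uses one mean-pooled vector per prompt, and effective rank follows the common definition as the exponential of the Shannon entropy of the normalized singular values. All function names are hypothetical.

```python
import numpy as np

def spectral_entropy(Z, eps=1e-12):
    """Shannon entropy of the trace-normalized eigenvalues of Z Z^T."""
    s = np.linalg.svd(Z, compute_uv=False)       # singular values of Z
    p = s**2 / (s**2).sum()                      # eigenvalues of Z Z^T / trace
    p = p[p > eps]
    return float(-(p * np.log(p)).sum())

def prompt_entropy(token_embeddings):
    """Diversity of token embeddings inside one prompt, shape (T, D)."""
    return spectral_entropy(token_embeddings)

def dataset_entropy(prompts):
    """Diversity across prompts; each prompt is mean-pooled to one vector
    (the pooling choice is an assumption of this sketch)."""
    Z = np.stack([tokens.mean(axis=0) for tokens in prompts])
    return spectral_entropy(Z)

def effective_rank(Z, eps=1e-12):
    """exp(Shannon entropy of the normalized singular values of Z)."""
    s = np.linalg.svd(Z, compute_uv=False)
    p = s / s.sum()
    p = p[p > eps]
    return float(np.exp(-(p * np.log(p)).sum()))

# Two prompts whose pooled embeddings coincide: dataset entropy collapses to 0.
same = [np.ones((2, 3)), np.ones((5, 3))]
# Two prompts with orthogonal pooled embeddings: dataset entropy is log 2.
distinct = [np.array([[1.0, 0.0, 0.0]]), np.array([[0.0, 1.0, 0.0]])]
```

With these definitions, `effective_rank(np.eye(3))` is 3 (information spread over three axes) and `effective_rank(np.ones((3, 3)))` is 1 (everything on a single axis).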

변형 불변성 기반

- InfoNCE

- 같은 의미의 입력 pair가 임베딩 공간에서 얼마나 서로 가까운지 측정

- InfoNCE loss가 낮을수록 robust함

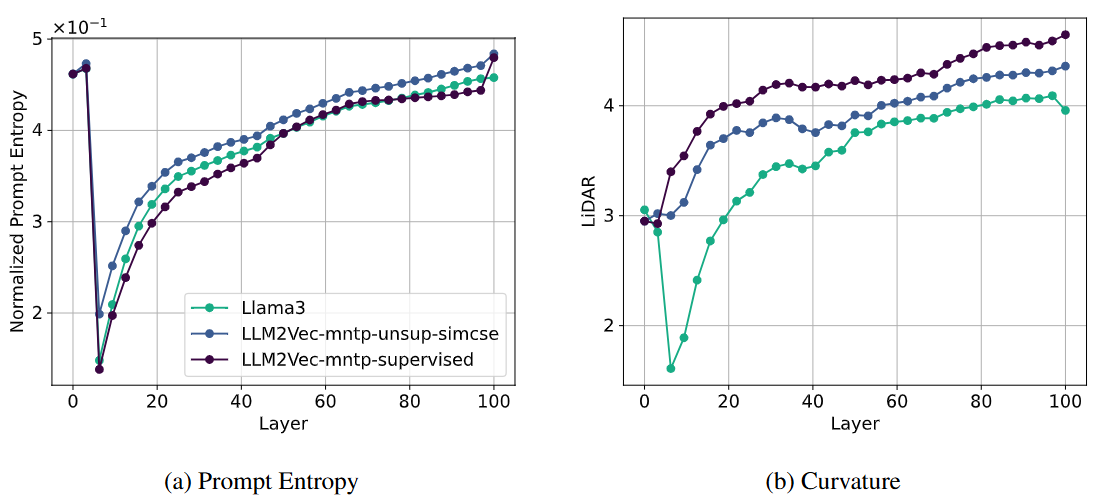

- LiDAR

- Augmentation 전후 임베딩이 얼마나 잘 클러스터링되는지 측정

- LiDAR 스코어가 높을수록 robust함

- DiME

- Augmented pair가 random pair 대비 얼마나 잘 정렬되어있는지 측정

- 높을수록 정렬 잘됨

- InfoNCE
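
Of the three invariance metrics, InfoNCE is the simplest to sketch. The version below is a generic one-directional InfoNCE loss over cosine similarities, not necessarily the exact variant used in the paper; the `temperature` value and the name `info_nce` are assumptions for illustration. LiDAR and DiME are not covered here.

```python
import numpy as np

def info_nce(z_a, z_b, temperature=0.1):
    """InfoNCE loss for N paired embeddings of shape (N, D).

    Row i of z_a and row i of z_b form a positive pair (for example an
    input and its augmentation); all other rows act as negatives.
    """
    z_a = z_a / np.linalg.norm(z_a, axis=1, keepdims=True)
    z_b = z_b / np.linalg.norm(z_b, axis=1, keepdims=True)
    logits = z_a @ z_b.T / temperature               # cosine similarities
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return float(-np.diag(log_prob).mean())          # positives sit on the diagonal

rng = np.random.default_rng(0)
z = rng.normal(size=(8, 16))
# Slightly perturbed copies stay close to their originals -> low loss.
loss_aligned = info_nce(z, z + 0.01 * rng.normal(size=z.shape))
# Pairing each sample with a different sample -> high loss.
loss_mismatched = info_nce(z, z[::-1])
```

A representation that is robust to augmentation keeps each pair close, which is what drives the loss down in the aligned case.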

Experiments

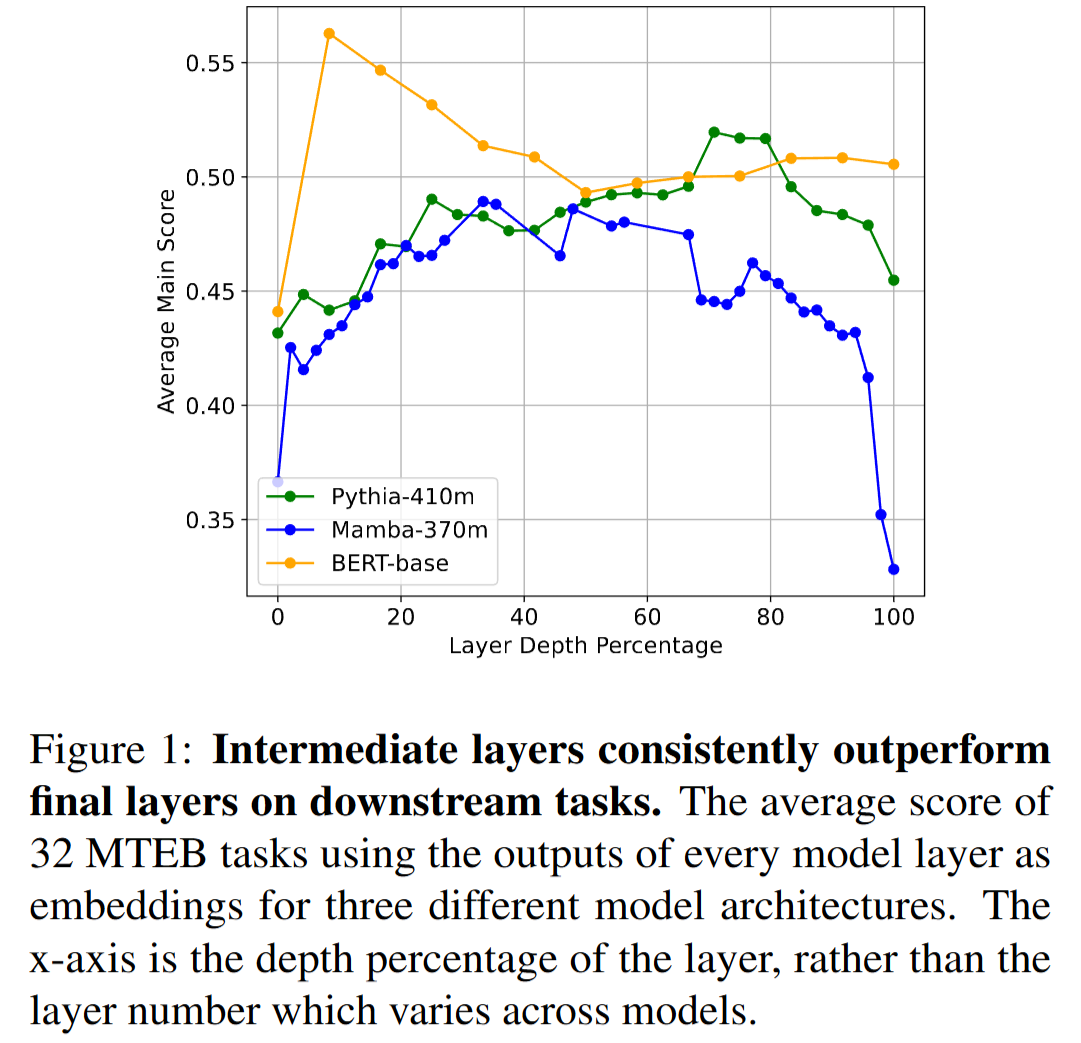

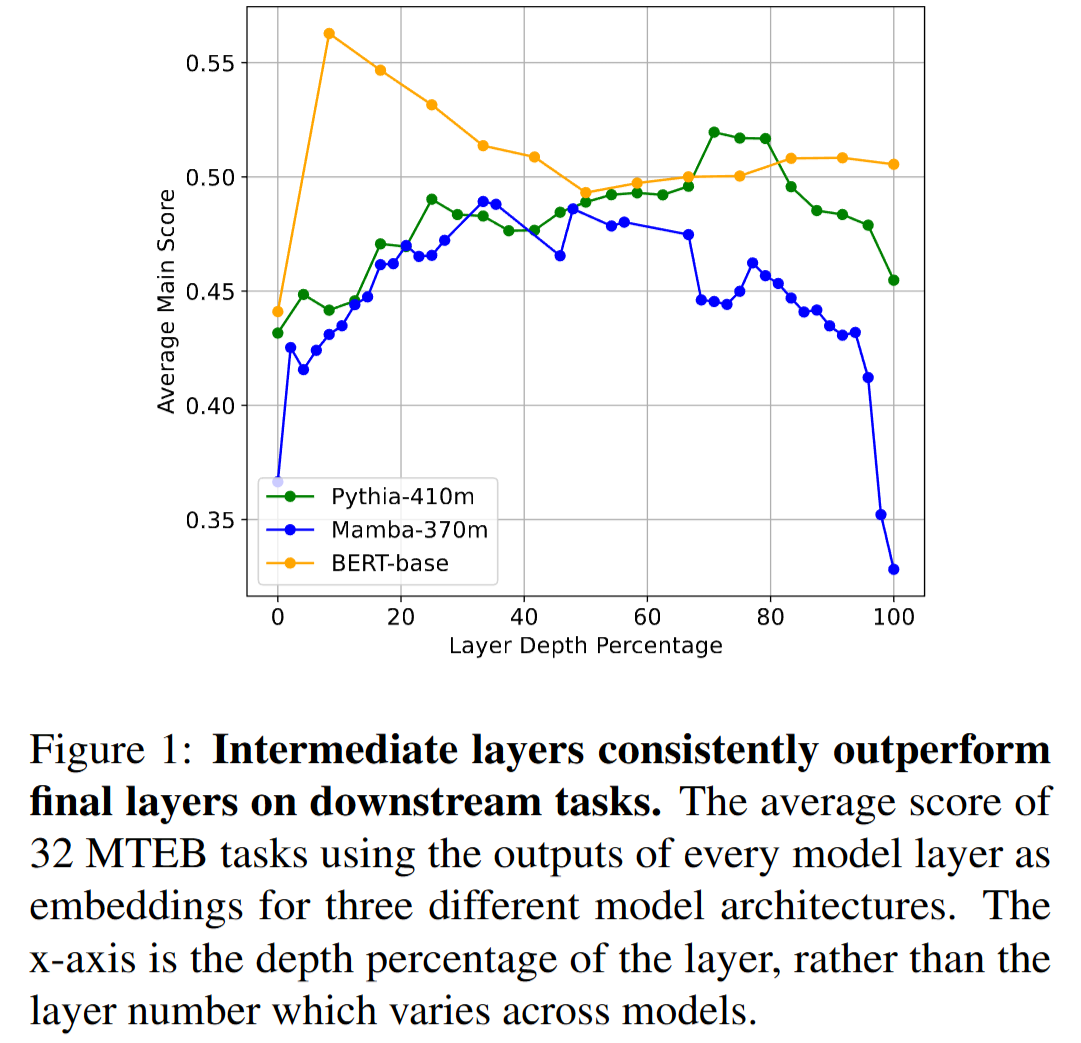

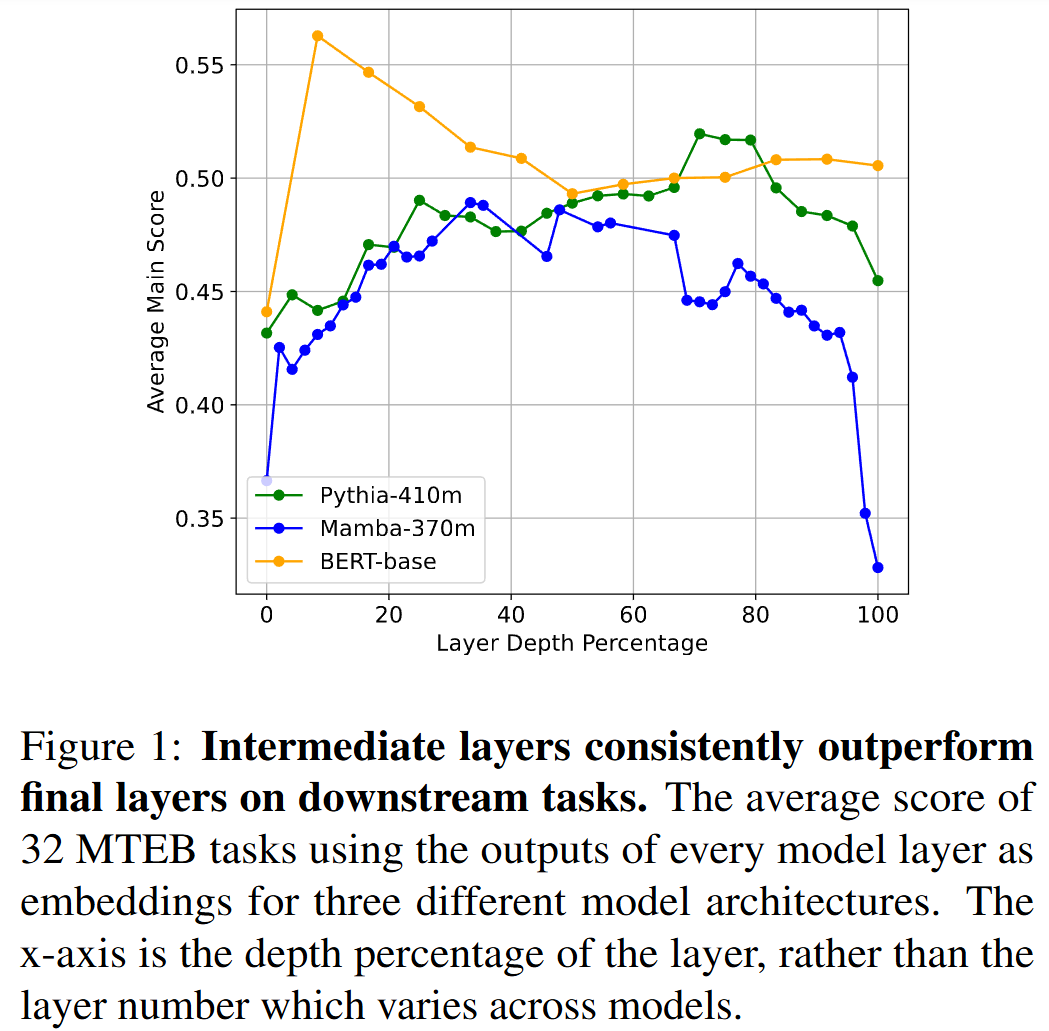

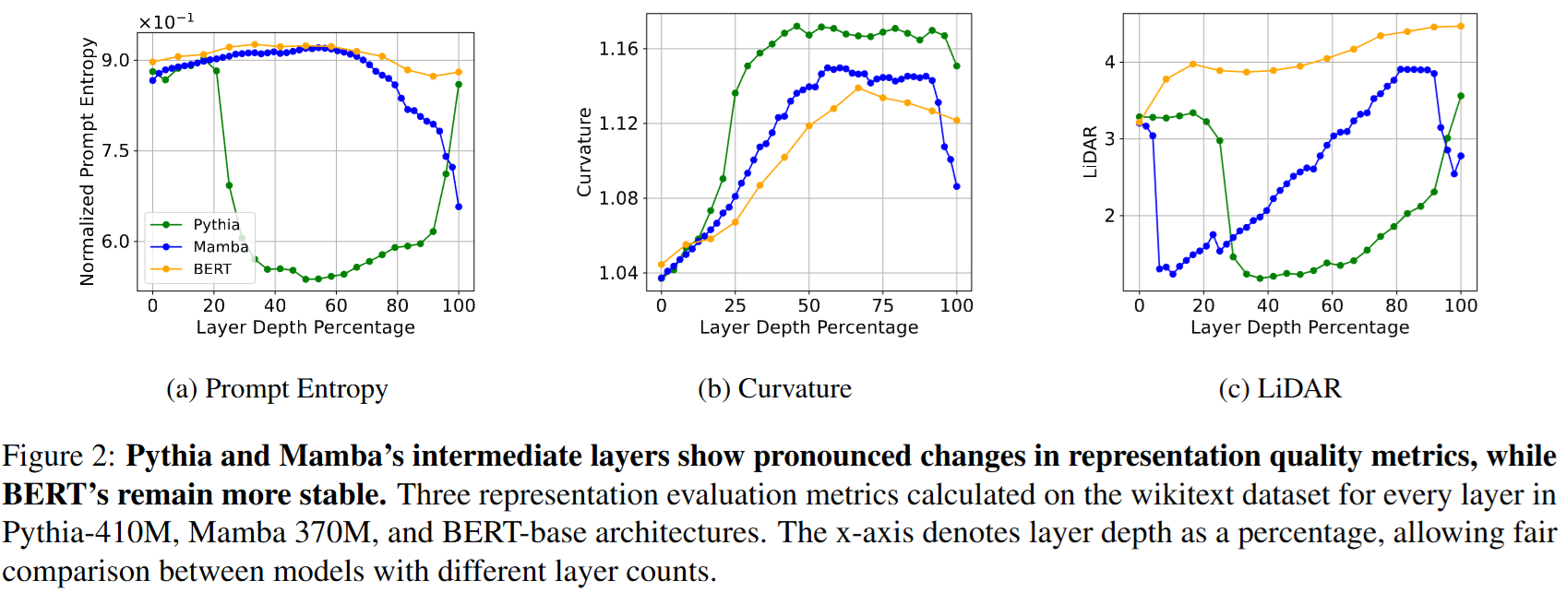

Downstream Task Performance

Empirical verification of "Is the last layer always the best?"

- Models compared

  - Pythia: Decoder-only Transformer

  - Mamba: State Space Model

  - BERT-base: Encoder-only Transformer

- Benchmark: MTEB (Massive Text Embedding Benchmark)

  - 32 tasks: Span classification, semantic textual similarity, clustering, reranking…

→ In almost every task, an intermediate layer scores higher than the last layer
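
A layer-wise evaluation like this needs one sentence embedding per layer. The sketch below shows one plausible way to obtain it, assuming mean pooling over non-padding tokens and a stacked array of hidden states shaped `(num_layers + 1, batch, tokens, dim)`; both the pooling choice and the names `pool_layer` and `embeddings_per_layer` are assumptions, not details taken from the paper.

```python
import numpy as np

def pool_layer(hidden_states, attention_mask, layer):
    """Mean-pool the token states of one layer into sentence embeddings.

    hidden_states:  (num_layers + 1, batch, tokens, dim)
    attention_mask: (batch, tokens), 1 for real tokens and 0 for padding
    returns:        (batch, dim)
    """
    h = hidden_states[layer]
    mask = attention_mask[..., None].astype(h.dtype)   # (batch, tokens, 1)
    counts = np.clip(mask.sum(axis=1), 1.0, None)      # avoid division by zero
    return (h * mask).sum(axis=1) / counts

def embeddings_per_layer(hidden_states, attention_mask):
    """One pooled embedding matrix per layer, ready to score on a benchmark."""
    return [
        pool_layer(hidden_states, attention_mask, layer)
        for layer in range(hidden_states.shape[0])
    ]

# Toy input: 2 "layers", 1 sentence, 3 tokens (the last one is padding), dim 2.
toy_states = np.arange(12, dtype=float).reshape(2, 1, 3, 2)
toy_mask = np.array([[1, 1, 0]])
pooled = embeddings_per_layer(toy_states, toy_mask)
```

With Hugging Face Transformers, passing `output_hidden_states=True` to a model returns a tuple of per-layer tensors that could be stacked into this layout; each pooled matrix would then be scored separately to compare layers.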

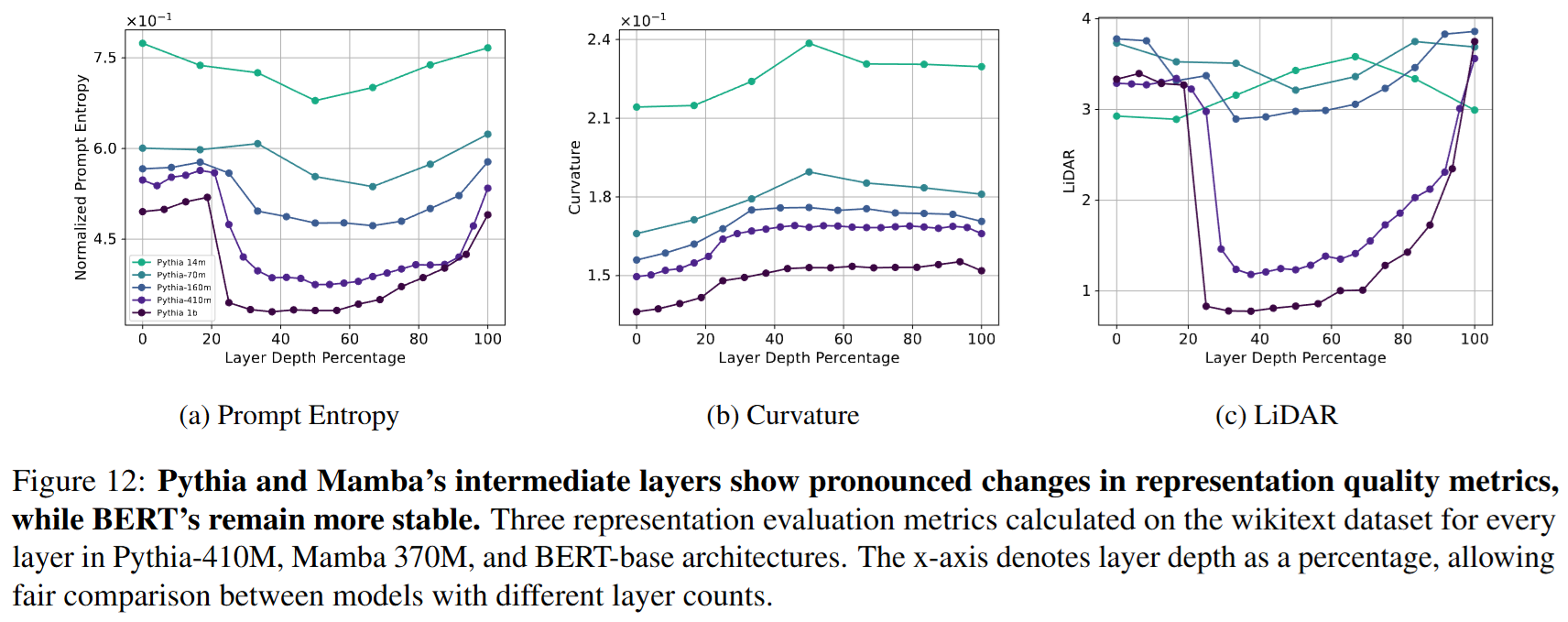

Architectural and Scale Differences

Examines whether the pattern of representation quality across layers changes with model architecture and size

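Comparison to Vision Transformers

- Autoregressive Image Model (AIM) (blue and orange in the figure above): a model that predicts sequentially like GPT → as with decoder-only Transformers such as Pythia, entropy is lowest at the intermediate layers

- In contrast, models trained non-autoregressively, such as ViT (pink), show steady curves (except BEiT)

- Non-autoregressive models have less need to compress information in the middle layers!

→ Conclusion: the difference in training objective (autoregressive or not) drives the difference in representations!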