26 March 2026

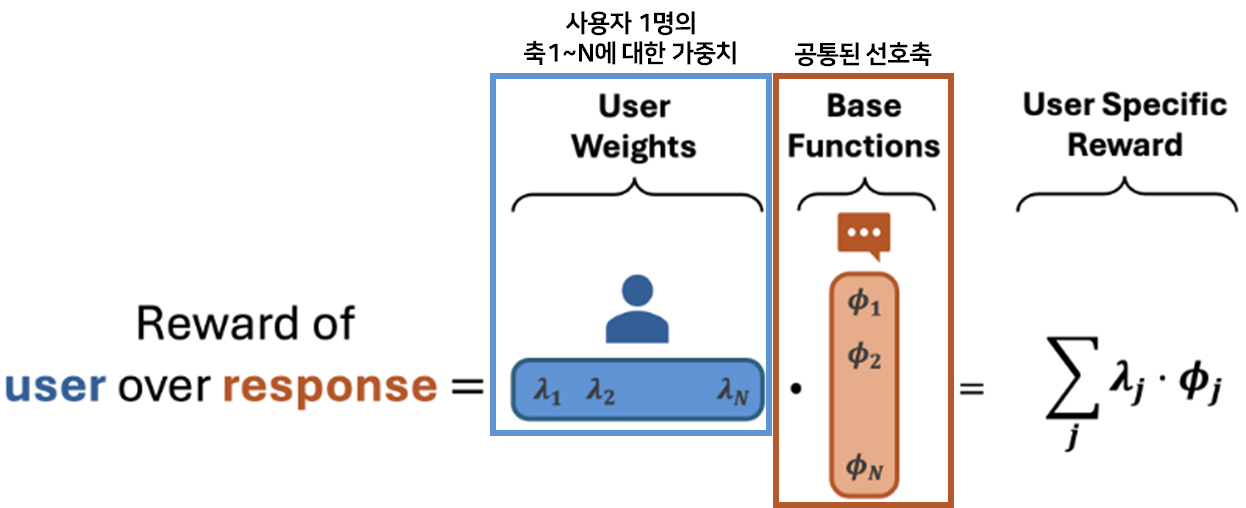

Language Model Personalization via Reward Factorization

COLM'25

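💡Decompose the preferences of many users into shared preference axes (e.g., friendliness, conciseness, formality) during training; then, when a new user arrives, quickly estimate that user's personalized preferences by assigning a different weight to each axis!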
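
To make the "one weight per axis" idea concrete, here is a minimal sketch, not the paper's implementation. It assumes the personalized reward is a linear combination of per-axis base rewards and that pairwise choices follow a Bradley-Terry (logistic) model. The function names `fit_user_weights` and `personalized_reward`, the step count, learning rate, and L2 strength are all hypothetical choices made for illustration.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def fit_user_weights(diffs, steps=500, lr=0.5, l2=1e-3):
    """Estimate one user's axis weights from a few pairwise preferences.

    diffs[i] = base_rewards(chosen_i) - base_rewards(rejected_i), shape (n, k),
    where k is the number of shared preference axes (assumed already learned).
    """
    diffs = np.asarray(diffs, dtype=float)
    w = np.zeros(diffs.shape[1])
    for _ in range(steps):
        # Probability the model assigns to the choice the user actually made.
        p = sigmoid(diffs @ w)
        # Gradient of the mean Bradley-Terry log-likelihood, minus an L2 penalty.
        grad = diffs.T @ (1.0 - p) / len(diffs) - l2 * w
        w += lr * grad
    return w


def personalized_reward(base_rewards, w):
    """Score a response for this user as a weighted sum of its axis scores."""
    return float(np.dot(base_rewards, w))
```

For example, if the columns of `diffs` stand for (friendliness, conciseness, formality) and the new user consistently picks the more concise, less formal response, the fitted weight on conciseness comes out positive and the weight on formality negative, so only a handful of comparisons is needed to rank new responses for that user.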

19 March 2026

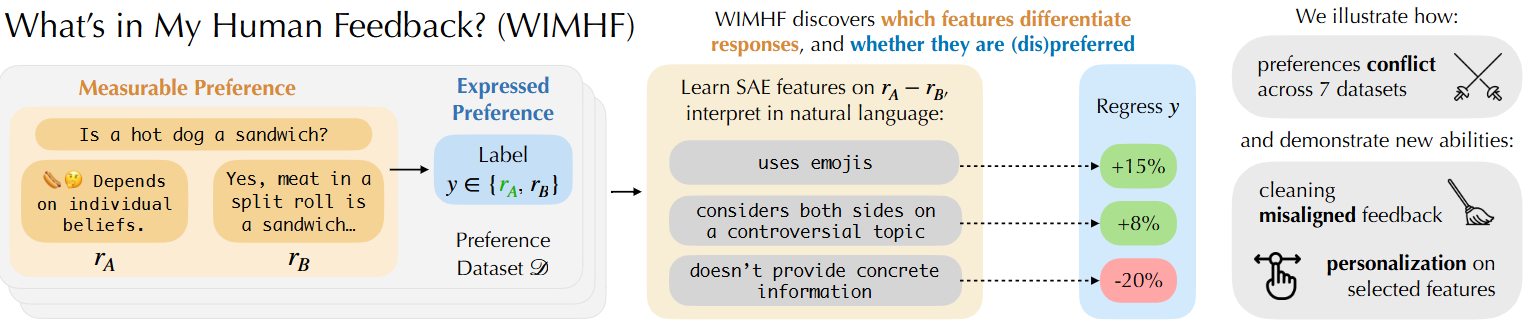

What’s In My Human Feedback? Learning Interpretable Descriptions of Preference Data

ICLR'26 Oral

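💡Proposes WIMHF, a method that uses a sparse autoencoder (SAE) to automatically extract, from a preference dataset, the latent feature axes that decide which of two responses is preferred, and that explains in interpretable natural language which response characteristics drive human preference.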